tecznotes

Michal Migurski's notebook, listening post, and soapbox. Subscribe to this blog.

Check out the rest of my site as well.

Dec 30, 2010 6:16pm

blog all oft-played tracks II

Like last year, these are some of the tracks I added to iTunes in 2010 and listened to the most, edited for clarity and minor historical whitewashing.

(Here are the MP3s below as an .m3u playlist)

1. Spoon: Got Nuffin

“Got nuffin’ to lose but darkness and shadows.” Most-played track of the year, from the only normal rock band I consistently spend any time with.

2. Jay-Z: Kingdom Come

I didn’t bump into this until August or so reading back into Ta-Nehisi Coates’s archives, but Kingdom Come might be one of my favorite songs this year.

It didn’t take long going down the list before I remembered THE best use of Rick James’ “Superfreak”. Nope, not Hammer. The prize goes to my studio neighbor at Stadium Red, Just Blaze. Really, he flipped the fuck out of that sample for Jay Z’s “Kingdom Come”. I had to hear the song a few times in a row just to figure out, as best I could, the arrangement of it all. … Something he nails really well is giving the song a sort of staccato energy that doesn’t exist at all on the original. I love how the bass line goes from being the focus on the original, to being the fill.

I love this idea of taking pieces of music and dressing them up as something entirely different. It’s old-hat to talk about “sampling” like it’s novel but really Kingdom Come just underscores how hard it is to do well, and how frustrating more obviously sample-driven musicians like Girl Talk can be. You listen to All Day, and you might like it, but in the end what you’re left with is a dumb bag of samples dressed in their factory uniforms jostling for your attention, strung one after the other. Ten years on, Z-Trip is still a wedding DJ dusting off the Eurythmics for your amusement, while Kingdom Come is closer friends to Orbital’s recombination of Tool or Skinny Puppy’s song-length misappropriation of Roman Polanski vampire movies (mp3).

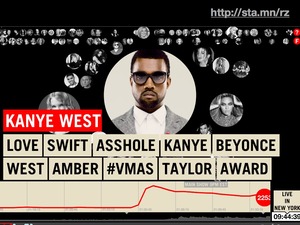

Anyway, the other thing I enjoy about Kingdom Come is the use of the studio as a social instrument. Watch Jay-Z and Kanye West working in the studio together, or read about Kanye’s “Hawaii rap-nerd nirvana” for a sense of how art is produced socially both among its producers and between the record and the listener.

3. Venetian Snares: Szamár Madár

Found via David O’Reilly’s Vimeo page and his gorgeous, spooky video for this track.

4. La Roux: Bulletproof

Another song found via the video, this time watched rather than heard on a phone in a restaurant. I love the clarity of La Roux’s voice, and the clinky/jangly pop production on the track.

5. Jamie Woon: Wayfaring Stranger

Spooky, quiet rendition of the old folk song, found via an installment of Electronic Explorations.

6. Netsky: Young And Foolish

7. Hot Chip: I Feel Better

Hot Chip were one of the few live shows I saw this year, at Oakland’s amazing Fox Theater. The video was directed by Peter Serafinowicz (of Look Around You), who says:

I like the idea of taking something we’re all used to seeing—like a boy band music video—and totally destroying it. So I wrote this proposal and included reference images I found on Google—to illustrate the bald guy in the video played by Ross Lee, I used pictures of Mr. Burns from the “X-Files” episode of “The Simpsons”.

I learned there is a boy band tradition—or possibly an actual physical manual—that says you have to have the tough guy, the cute one, the suave one, the one who takes his top off.

8. Vapour Space: Gravitational Arch Of 10

37.752467, -122.418699, 1996-11-17 07:00:00 is the point in spacetime whenwhere I first heard Gravitational Arch Of 10. The sun was already up, and it was one of those things where you think the music is over and then it all rushes back.

9. Miike Snow: Black And Blue (Savage Skulls Remix)

10. Star Eyes: Ruffage (Side A)

This isn’t really a track so much as a 45-minute long mix of drum n’ bass. The MP3 version finally found me this year (thanks Jeremy) after I burned through or lost three separate copies of the cassette over the past fourteen years.

Dec 21, 2010 11:09pm

winter sabbatical 2010: days twelve through sixteen

These posts have drawn somewhat farther apart; this past week has definitely thrown a wrench in my gears. Eric, Shawn and I do a quarterly full-day Stamen partner meeting, and this time we thought it’d be fun to do it down in Palm Springs to celebrate winter. So, down we went on Tuesday and Wednesday last week. Palm Springs is a strange place that rightfully shouldn’t exist, but we still ate two of our meals at the old folks’ country club tennis resort near our hotel. We also managed to squeeze in a visit to the converted 7-11 carniceria/taqueria in Banning that Gem, George, Michele and I found last year on our trip to Joshua Tree. Off the chain, those tacos are.

As you can imagine this hasn’t been a banner week for productive code, research, or design, though I have managed to push a few things out into the world.

(TL;DR*: Skeletron, Oh Yeah, Paper!, Pinboard username mapper)

The first is that my straight skeleton code from last week has a new public home on Github and a new name, Skeletron. Although quite minimal for now, I’ve taken care to ensure that a bare-bones HTTP interface is built right in. You can try it at skeletron.teczno.com:8206. The mnemonic for the port number is the number of bones in the human body. More on all that in a separate post.

The second is a minimal new blog I’ve started, Oh Yeah, Paper, a link-and-picture dump for interesting paper-related things. My research interest in paper is mostly around communication technologies like Mayo Nissen’s City Tickets and easy print-on-demand services used for custom book printing, but there’s plenty of room for silliness as well. I’ve been posting to this site for a bit over a week now, and didn’t want to say anything until after I’d proved to myself that I could keep up a simple daily posting schedule for a little while. We’ll see how that goes.

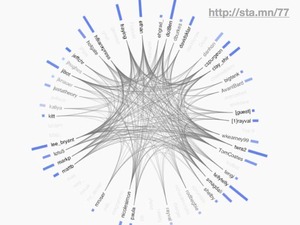

The big news this week is Yahoo!’s incomprehensible shitcanning of venerable bookmark-sharing service Del.icio.us. This is one of those cases where it’s hard to distinguish malice from stupidity, so I’m grateful to Maciej Ceglowski for having started Pinboard last year. I’ve had an account there since the beginning, and a few weeks ago I started noticing a number of people in my network moving house - James, Aaron, Paul, among many others. Henryk Nyh was kind enough to create a username mapper to speed the transition for folks.

One important outcome of this move has been the sudden interest in baking your own. Jeremy Keith suggests a home-grown Delicious, the “self-hosting-with-syndication way of doing things”. This is largely what I’ve been doing with Delicious for a few years now. I keep the primary copy of my bookmarks in Reblog, and when they’re published I push them to Delicious, Twitter, and my own linkblog. Some of those channels get the tags, some get the full description text, and one just whatever short link and title fragment happens to fit in 140 characters. The elephant in this room is that a primary value of Delicious has always been its network feature, which I use as a primary research tool and general way of finding things I should be paying attention to. Marshall Kirkpatrick wrote a bit about how ReadWriteWeb used the network feature to do research, which meshes pretty well with my own approach. Clearly the social bits were important, something that Jeremy talks about in his post. Stephen Hood (former Delicious product manager) makes the excellent suggestion that the Delicious data corpus be donated, perhaps to the Library of Congress or the Smithsonian. This supports the idea that the data there has value as a historical artifact from 2004-2010, and fits especially well with Twitter’s corresponding donation of public tweets to the LOC. Twenty years down the line, looking at those two datasets in concert is going to lead to some fascinating research.
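The self-hosting-with-syndication pattern is small enough to sketch. What follows is not Reblog's publishing code, just a minimal Python illustration of one primary bookmark record being shaped differently for each outgoing channel. The record fields, channel names, and function names are all assumptions made up for this example.

```python
def short_message(title, link, limit=140):
    """Fit as much of the title as possible, plus the link, into `limit` characters."""
    room = limit - len(link) - 1  # leave one character for the separating space
    if room <= 0:
        return link[:limit]
    return f"{title[:room].rstrip()} {link}"


def fan_out(bookmark):
    """Shape one primary bookmark record for three hypothetical channels."""
    return {
        # a channel that keeps the tags
        "tagged": {
            "url": bookmark["url"],
            "title": bookmark["title"],
            "tags": list(bookmark["tags"]),
        },
        # a channel that keeps the full description text
        "full_text": {
            "url": bookmark["url"],
            "title": bookmark["title"],
            "description": bookmark["description"],
        },
        # a channel that only gets a short link and a title fragment
        "short": short_message(bookmark["title"], bookmark["short_link"]),
    }


bookmark = {
    "url": "http://example.com/a/very/long/article/address",
    "short_link": "http://example.com/x1",
    "title": "An article title that runs on for quite a while " * 4,
    "description": "Longer notes about why this link is worth keeping.",
    "tags": ["maps", "paper"],
}
messages = fan_out(bookmark)
```

The primary copy stays in one place, and each channel is a lossy view of it. Dropping or swapping a channel means changing one entry in `fan_out`, and the stored bookmarks are untouched.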

There have also been a bunch of responses to the problematic idea that it’s possible to bang out your own alternative service, maybe best expressed by Andre Torrez in How To Clone Delicious in 48 Hours:

You may have heard that Delicious is shutting down (or not?). Someone on Twitter suggested that a group of engineers should get together on a weekend and build a Delicious clone. In anticipation of this mystery group of people sitting down and doing this, I thought I'd make a quick todo list for them.

Andre continues with a laundry list of social features like account systems, imports, tags, etc. Jeff Atwood has a similar post on the difficulties of sending email from code. While both of these guys are clearly accomplished developers, I think they’re expressing a little too much stop energy for my comfort. Andre lists a number of “critical” features that Pinboard is cheerfully lacking right now, and Jeff is ignoring the presence of services like AuthSMTP and Spam Arrest offering completely brainless SMTP for not very much money. While you wouldn’t build something huge on top of these services, it’s worth remembering that Delicious started life as a text file, and grew into its present form over time. Things start tiny and develop direction over time as user-constituents suggest ideas or push and pull the service in new directions. There is still a lot of good you can do online with plain old flat files, and even Twitter was able to get a useful, fun service built on quicksand.

Any product that includes a community component is a vector rather than a point: there’s where you started, and the possible gulf between where you think you’re going and where your constituents think you’re going.

Anyway.

Dec 14, 2010 4:52am

winter sabbatical 2010: days eight, nine, ten, and eleven

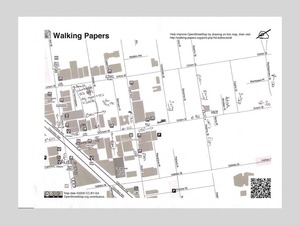

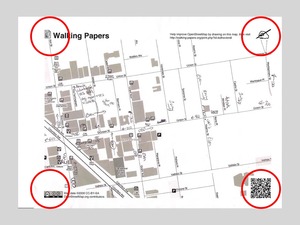

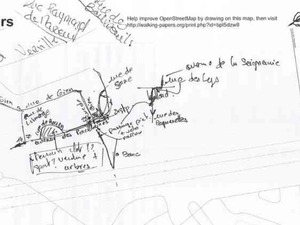

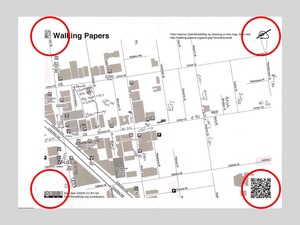

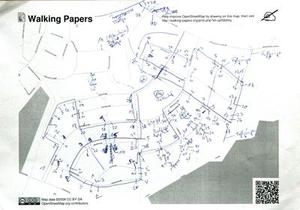

I spent days eight and nine in Chicago. Walking Papers is up in all its crinkly glory at the Chicago Art Institute, in the Hyperlinks exhibit thanks to curator Zöe Ryan. Eric, Geraldine, and intern Martha Pettit arranged a selection of submitted scans over a twelve foot wall. It was bitterly cold, and I thought I’d do a much better job of getting out and about without being quite prepared for the reality of weather. Adrian, Paul, and the gang at Everyblock kindly offered a bit of couch space at their northside office for me to lounge on during day nine, and when I left I saw a tiny rabbit cross my path in the snow. I’m told that’s normal - “lots of friendly squirrels and bunnies in Chicago.”

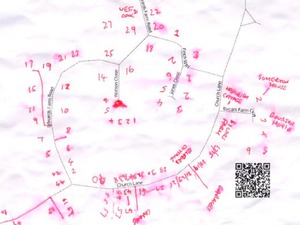

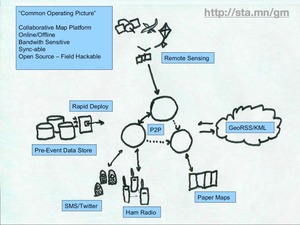

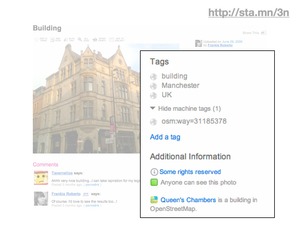

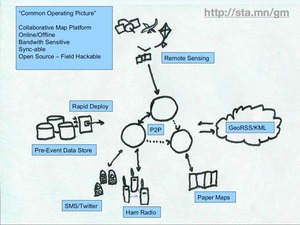

Day ten was travel, and movies, and general laziness. I launched the Atlas feature of Walking Papers, which you can see if you compose a new scan on the front page. You get back a multi-page PDF; nothing special but the possibility of using these to distribute assignments to a group is something I’ve been planning for. Since I last mentioned it, the Random Hacks Of Kindness TaskMeUp project popped onto my radar - it seems to be built around some of the same ideas, aiming for the world of humanitarian and crisis response.

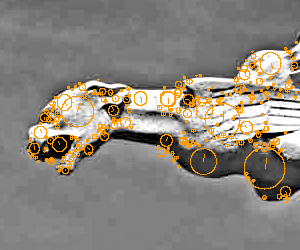

The thing that really ate my head for the past few days has been a computational geometry technique called the straight skeleton. The short story is that it’s a way to simplify polygons down to lines with special applications for cartography. The long story is that I have a personal history of letting what Aaron calls “mathshapes” consume me for days on end, and this is the latest example. Some people have knitting, crossword puzzles, gambling, or crack. I have geometry, and my biggest personal challenge during a fairly open-ended sabbatical like this one has been to ensure that even when heading down blind alleys, there’s a plan for sharing the results.

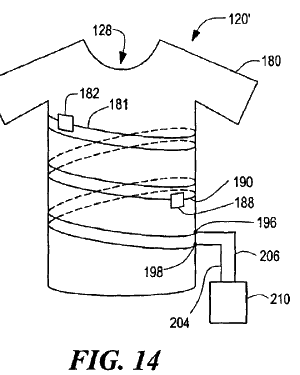

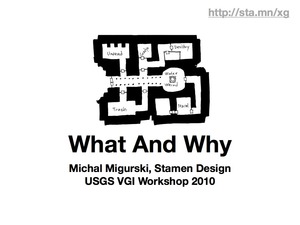

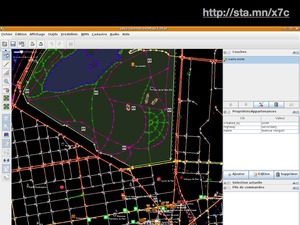

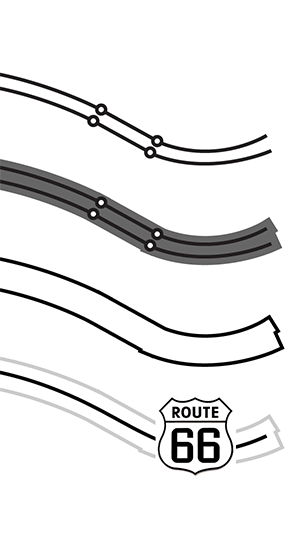

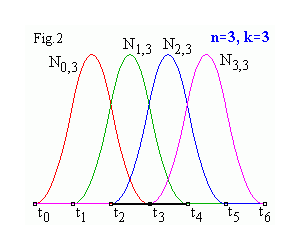

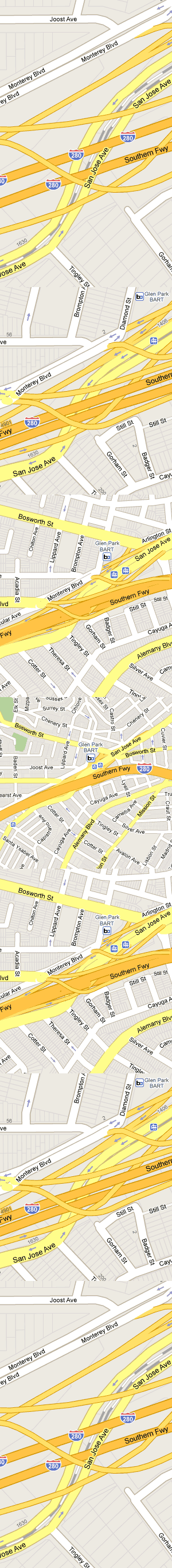

Anyway, this is a simplified illustration (from my State Of The Map U.S. conference slides) that shows how the use of buffers, polygons, and the straight skeleton can be used to convert OpenStreetMap road data into improved, more easily-labeled lines:

As I mentioned in my NACIS keynote last year, OpenStreetMap has a fairly specific range of scales where it’s designed to work best, and if you need to create a lower-zoom map you will find that details like dual carriageways (large roads and freeways split into parallel strips) and interruptions for bridges or tunnels make it incredibly hard to generate good looking labels, not to mention the issue of publishing raw data in a useful or compressed form. While deriving the skeleton of a shape is perfect for this problem, there’s not a lot out there in the way of accessible implementations of the algorithm. OpenStreetMap has no current answer to the idea of derived, lower-resolution datasets outside the very low-resolution Natural Earth collection. OSM is historically biased toward manual production, and Steve Coast has advised me that setting up lower resolution OSM servers for manual tracing might be a more sensible way to handle this unmet need. I do agree, though computed geometries offer an excellent leg up to solve the cold start problem somewhat.

So, I blew through two days reading through implementation notes and doing a bit of coding, starting from Tom Kelly’s Java implementation Campskeleton. I’m not a Java programmer, but Kelly’s straight skeleton index page offers plenty of notes on how to actually get the thing built. Most important are a few late-90s academic papers: Raising Roofs, Crashing Cycles, Playing Pool by David Eppstein and Jeff Erickson and especially Straight Skeleton Implementation by Petr Felkel and Štěpán Obdržálek, along with the cartography-specific approach from Using the Straight Skeleton for Generalisation in a Multiple Representation Environment.

Anyway, here’s where I’ve gotten to:

The easiest way to think of the straight skeleton is as a peaked roof on a building. Starting with the gutters of an arbitrarily-shaped building, you build up a sloping roof until the bits all meet in the middle. The ridge of the roof all the way around is the skeleton, and it gives a pretty good idea of the building’s axis, or a path it follows.
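The roof picture can be checked in a few lines of Python for the simplest case. This is not the Skeletron code and it skips the event-queue machinery from the papers above. It is a minimal sketch that assumes a convex footprint with vertices listed counter-clockwise and a 45-degree roof pitch. Under those assumptions the roof height over a point is its distance to the nearest wall, and a point sits on the skeleton when two or more walls tie for nearest. The function names are invented for this example.

```python
from math import hypot


def wall_distances(polygon, point):
    """Signed distance from point to each wall's line (positive means inside, for CCW input)."""
    px, py = point
    distances = []
    for i in range(len(polygon)):
        (x1, y1), (x2, y2) = polygon[i], polygon[(i + 1) % len(polygon)]
        dx, dy = x2 - x1, y2 - y1
        # cross product of the wall direction with the vector to the point,
        # divided by the wall length to turn it into a distance
        distances.append((dx * (py - y1) - dy * (px - x1)) / hypot(dx, dy))
    return distances


def roof_height(polygon, point):
    """Height of a 45-degree roof over a point inside a convex footprint."""
    return min(wall_distances(polygon, point))


def on_skeleton(polygon, point, tolerance=1e-9):
    """True when the point is inside and its two nearest walls are equally far away."""
    nearest, second = sorted(wall_distances(polygon, point))[:2]
    return nearest >= 0 and abs(nearest - second) <= tolerance


# a 4 x 2 rectangular building, counter-clockwise from the origin
building = [(0, 0), (4, 0), (4, 2), (0, 2)]

print(roof_height(building, (2, 1)))      # 1.0, the top of the ridge
print(on_skeleton(building, (2, 1)))      # True, on the central ridge
print(on_skeleton(building, (0.5, 0.5)))  # True, on a hip running up from a corner
print(on_skeleton(building, (2, 0.5)))    # False, partway up one roof face
```

For non-convex shapes like a curving road this shortcut stops working, because reflex corners send out their own roof lines and walls can split or collide, which is where the priority list of intersections comes in.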

That angular blob might not look like a map, but imagine it as a curving road. Here’s what I’m aiming for, more generally:

The idea is similar in spirit to Paul Ramsey’s simplified Vancouver Island. There are a bunch of pitfalls in the process. Here’s one from before I figured out how to correctly order the priority list of roof line intersections, and didn’t check that points were actually inside the containing polygon:

Here’s an example showing an imaginary dual carriageway, buffered out to merge the shapes into a single polygon and then skeletonized down the middle:

The algorithm is incredibly sensitive to initial conditions, such as this example where a few extra parallel lines result in a double-peaked roof. It’s a plausible overhead view of a building, and a correct skeleton, but not quite the thing for cartography:

It’s also worth noting that I’m not doing anything to detect collisions just yet:

I’m getting close to done with this alley. The particular needs I’m aiming at are largely connected to the book-based bicycle map I described last time. It’s possible now to render excellent maps from OSM, but the medium of print makes small errors much more glaring, and I’m interested in fixing some of the loose ends of cartographic representation and OpenStreetMap.

So, math shapes.

Dec 8, 2010 11:07pm

winter 2010 sabbatical: days four, five, six, and seven

It’s been almost a full week since my previous sabbatical update. Where was I? Oh right, maps. I’ve been making progress on two fronts: one is a series of updates to Walking Papers, the other is a print project that currently lacks a name. The Wikileaks affair has also been completely engrossing, more on that below.

As I described last week, Walking Papers will have a multi-page atlas feature Real Soon Now, probably within a few days. I’ve been using the opportunity to improve the overall quality of the print and scan production process and also moved the whole site from its previous home on my overburdened Pair.com shared hosting account to a fresh new Linode instance. I’m also doing a fair bit of behind-the-scenes work on the scan decoding process, which I’m embarrassed to say has been riddled with problems from day one. The SIFT-driven corner-finding process has never been a major issue, but a lot of scans seem to fail because the QR Code reading library (Google’s Zxing) often can’t find a message in an image that’s nothing but crisp, isolated, beautiful code. I don’t know enough about the internals of Zxing to fix the problem there, but I can insert a manual step to allow people to simply type in the address contained in the code in the event of a read failure. Boring, but necessary. The other bit of sub-surface work I’m starting on is the ability to use a normal digital camera to read back the scans, hopefully with U.C. Berkeley’s Sarah Van Wart and prompted by Ushahidi’s Patrick Meier. Sarah has used the project to great effect with Richmond High School students and Patrick knows from firsthand experience how much of a pain it can be to find a scanner while everyone’s got cameraphones in their pockets.

The other print project is something I’m collaborating on with my friends Adam Schwarcz and Craig Mod, bicycle-enthusiast and book-maker respectively. It’s a long-time-in-the-works bicycle map of San Francisco and Oakland based on government data and OpenStreetMap, with a barcode-driven print/digital connection that we’re still brainstorming about. This was only my second exploration in the area of data-driven automatic print design when Adam first suggested the idea last year, but I’ve had enough experience with talking paper in the intervening time that I’m using my sabbatical to jump back into the project. Look for more on this in the next few weeks.

Alon Salant over at Carbonfive was gracious enough to offer a desk for a few days, and while working on these various projects I’ve also had a privileged view on what an agile workplace looks like. It’s been fascinating to observe from inside a development process that seems built around conversation, and while camped out at a loaner desk in their 2nd St. office I heard the beating heart of design and technical arguments that I’m more accustomed to experiencing via text.

At the moment I’m in Chicago, in what the bellhop (bellhop!) tells me is Al Capone’s old dentist’s office. Need to buy gloves, eat, and then head to the Art Institute where Walking Papers is part of the Hyperlinks exhibit.

Meanwhile, Uptown...

This week, I am helplessly transfixed by the Wikileaks story. There’s so much meat to this unfolding event, well-covered so far by Andy Baio’s Cablegate roundup. What’s really caught my interest has been the reactions of businesses like Amazon, Paypal, Mastercard, and Visa - all supposedly independent businesses suddenly acting in concert to isolate and marginalize a strange new actor. The timing of the response suggests that it wasn’t triggered by the release of the diplomatic cables at all, but rather by Julian Assange’s promise to release future documents related to the activity of an unnamed major bank. It’s like Naked Lunch, a moment when time grinds to a halt and you can see what’s sitting on everyone’s fork as they raise it from their plate. Hypothetical arguments about the likelihood of cloud data providers like Amazon or payment processors like Paypal cutting connections evaporate instantly: here is a clear-cut example of an inconvenient release of information scaring the shit out of someone enough to apply the screws.

Assange’s past writings about conspiracies and invisible governments painted a picture that was too large and too diffuse to elicit a local reaction. A conspiracy as subtle and far-reaching as that described by Assange might not be worth reacting to, because how can it be distinguished from simple alignment of interests? How can a private individual successfully act in response? Here, though, we see pieces of the whole suddenly illuminated as by a camera flash: milquetoast diplomatic chatter causes Amazon to suddenly decide that it cannot abide the hosting of unauthorized material. A strangely-timed sexual assault charge leads to an unprecedented Interpol warrant for Assange’s arrest. Senators and congressmen opine that if pursuing Wikileaks is difficult under the law we have, then perhaps we might look into changing the law?

The decision to leak a stream of diplomatic cables (as opposed to any one particular cable) is a sharp departure from typical journalistic approaches, which is really what I’m finding so engrossing in this story. Yesterday on the radio, a former general counsel of the C.I.A. complained that the released data lacked a “patina of journalism” or an editorial function (and therefore did not qualify for constitutional protection), which suggests to me that the government is now actually quite comfortable with the occasional fallout of the shocking revelations of journalism-as-usual. Even sustained evidence of state-run torture and of course that fucking war hasn’t led to the breadth and depth of reaction we’re seeing now: “All hands, fire as they bear.” Why now? The amusing dullness of the leaked cables shows that Wikileaks has decided to hunt upstream, taking aim at the metabolic processes of communication and secrecy. If there is in fact a conspiracy, and this is how it talks to itself, maybe we play a few games with its own internal communications to see what happens?

What’s currently happening is that all sorts of actors are responding in unexpected ways. Like a meadow crossed with underground gopher tunnels, Amazon’s reaction suggests that at least a few widely-separated individuals are in fact quite deeply connected and spooked enough to show themselves above ground in surprising places. Maybe a simple response is that I take our (four-figure per month) Amazon Web Services business to a competing cloud provider? Maybe another is that I route around Paypal in the future? On the other hand, how could I conceivably avoid Mastercard and Visa? Hopefully, Julian Assange is what he says he is: a spokesman and intentional fall-guy for a much larger group that can act without him.

Meanwhile, I’ve got my bowl of popcorn sitting here and it’s looking like a fascinating ride.

Dec 2, 2010 8:55am

winter 2010 sabbatical: days two and three

Two days have elapsed, all is well.

Yesterday I spent most of my morning dragging the six month-old “Atlas” feature of Walking Papers into a releasable state. It’s not quite there yet, but it’s significantly closer than the one I bashed together in a few days at a Camp Roberts exercise back in April. The general idea behind this feature is that the single-sheet bias of Walking Papers is a hindrance to people covering large areas, and more significantly it doesn’t help people who are delegating work. I continue to be surprised at the outcomes of this project - Eric is more focused on the tactile and aesthetic qualities of the prints themselves, while I’m interested in some of the social and organizational implications. It was always designed to be personal and utilitarian, but we’re finding that in a lot of ways it’s not quite those two things, not necessarily at the same time. Socially, the idea of multiple-page outputs opens the dynamic of tasking or assignment, which turns the sheet of paper into a communicating object. Why should it necessarily be the same person choosing the area, printing everything, handing out maps, noting down features, running them back and doing the scanning? Properly organized, all those activities can be parceled out and done more effectively for large areas.

An important thing that happened was the release of Bing imagery for tracing into OpenStreetMap, one of the most visible outcomes of Steve Coast’s new job over there. Also, the OSM Flash-based editor Potlatch 2 was finally released into the wild. Something really significant is happening with OpenStreetMap right now - it’s hitting all these critical communities and corporations and sparks and ripples are shooting out.

Today I spent most of the day catching up with Stamen alumni Ben Cerveny and Tom Carden, and working on a little thing we’re developing with Adam Greenfield and Nurri Kim over at Do Projects. It’s hiding in plain sight, I’ll talk more about it later when I’m more comfortable that we’re close to release.

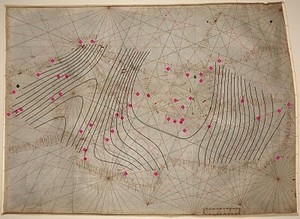

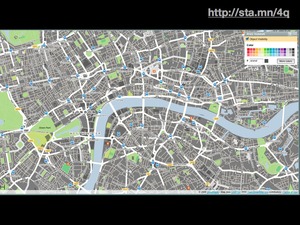

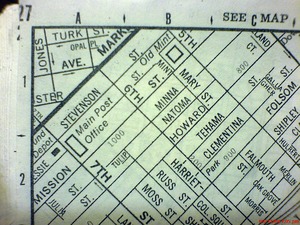

Also, mostly thanks to Aaron, I pinned a bunch of new cartography porn:

Nov 30, 2010 4:00am

winter 2010 sabbatical: day one

Today was the first full day of my planned winter sabbatical, and I'm mostly just getting used to the idea of having six weeks of open time in front of me. My plans so far are amorphous, but I know these things:

- I have a pile of small-to-medium-sized experimental, research, and development work I've been anxious to think about. Most of it is in some way connected to geography or cartography, right now I'm arranging pieces to figure out how they might fit together.

- I'll be visiting people. In January, Matt Ericson at the New York Times has graciously offered the use of a desk for a week. Until then, I've got a few other multiple-day visits I'm arranging with other folks I know. Partially I'm looking to vary my surroundings, but I'm also interested in being around people whose work I like to get a sense for how they get it done. I'm thinking of simple things here: furniture layout, how close people are to one another, do they move around a lot or use headphones, is it chatty and friendly?

- My daily schedule will be broken up into chunks for making/doing (mornings, evenings) and chunks for talking/moving (afternoons).

- I haven't gone to the gym or ridden my bike in a very long time.

Today, I fixed the way that Reblog was talking to Delicious and Twitter, so that my public Twitter feed, Delicious account and links feed could all be synchronized again. I used to publish a regular stream of links, but stopped around the time that Twitter upgraded to OAuth and everything broke. It made me feel mute and disconnected, not having access to my normal means of publishing tiny things. Pinboard helped somewhat. I also pushed a new version of TileStache, focused on the vector tiling needs of Polymaps. See this GIS Stack Exchange thread for a bit of context. Then I had a steaming bowl of amazing Pho from Ba Le in downtown Oakland, walked the dog, and caught up on some reading courtesy of Aaron.

Also, I found these 3" x 4" post-it labels that fit perfectly on my wrist rest for notes:

Nov 3, 2010 6:03am

election report

This election, I decided to try something different and volunteered as a poll worker. I was given the role of “standby judge”, and late yesterday the Registrar’s office called to say that my help was needed as an Inspector at a downtown Oakland polling location whose regular Inspector was sick. Having had a three-hour class that taught me all about the voting process this year and otherwise no relevant experience, all I knew was that I had been put in charge of a polling place where I was to spend a 15-hour Tuesday supervising the primary point of democratic feedback.

Things I learned today:

- You can seriously never have enough pens. Our critical path today was pen availability: the R.O.V. gives each precinct a certain number, and then a bunch of people show up and that number runs out. We tried to buy more in the middle of the day, but there wasn’t a nearby store that sold ballpoints in bulk. Next time I’m bringing a bunch of bics and keeping them secret until the evening voter rush, at which point I will quietly disperse them into the penstream.

- People find it surprising when you greet them at the polls by saying “I know you from Twitter” (hello, Mitch).

- Election technology, at least in Alameda County, is basically Peak Papernet*. Every piece of the system works together and the County seems to run their elections by Slashdot standards: there are voter-verifiable paper audit trails for the touchscreen, most voting happens by filling in a little broken arrow icon with pen and paper, every sheet and envelope includes a unique identifier with a tear-offable receipt, and every box and bin comes with tamper-evident stickers. Every piece of the process, including the end-of-day shutdown procedure, has built-in safeguards that use simple counting and sorting procedures to ensure that each ballot is accounted for in some numbered ziploc bag: counted, provisional, unused, spoiled, etc.

- One effective way to ingratiate yourself with a group of people you’ve only just met is to spend $25 at Whole Foods on a bag of gift snacks. Cookies and fruit seemed effective, including the vegan ones that come in a paper bag and everyone goes “ew, vegan” and then they try them and holy shit.

- I am basically happy to cheerfully yell the same thing all day long, to an unending stream of new voters. I’ve always enjoyed the security lines at airports where someone in a sharp suit yells at everyone to keep moving, so I tried to do the same thing here. People in groups faced with bureaucratic procedure become cattle, and need to be helped along not because they don’t know what to do but because everyone in line needs to know what everyone else knows to do. “Keep moving”, “wait here for five minutes while the floor clears up”, “give this envelope to the man in the hat”, and “Hello what’s your last name?” are most of the words that came out of my mouth today.

- A system must include provisions for constant forgiveness.

I was sad to see Prop 19 go south and I still haven’t had the heart to check if I was successful at kicking the BART Board to the curb, but otherwise it was one of the most exciting and exhilarating ways I can imagine to spend a weekday and make $100 in the process. I would totally do this again, and I recommend the experience to pretty much anyone.

Oct 20, 2010 6:00am

winterbound

I'm relieved that it's winter. I've just returned from Australia, where it's spring and beautiful and pointed at the southern stars. I was the second day's keynote speaker at the ever-excellent Web Directions (South) conference, and I visited Mitchell Whitelaw at the University of Canberra, and before that I was in Denver for Planningness, and before that Mark Hansen invited me to do the first statistics seminar lecture of the year at UCLA. We've just dodged a genuinely-interesting but still bullet-shaped project at Stamen and things are looking to settle into a welcome groove. I'm researching simulated annealing for map label placement and thinking about some upcoming projects and just generally glad to have organized a sort of loose sabbatical for the period starting with Thanksgiving and ending a little ways into 2011.

Sep 27, 2010 5:30am

map sprint

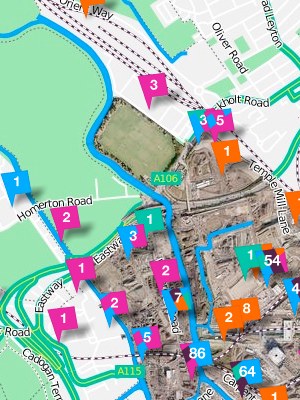

This weekend marked the first global Mapnik Code Sprint. Most attendees converged on Cloudmade's London office, while Nino Walker and I held down the fort in San Francisco and Robert Coup dialed in from New Zealand.

Having just spent about two days thinking deeply about relative path resolution in Mapnik side-car Cascadenik, I'm fairly happy with where the code base has arrived. My goal with all of this stuff - introducing web cartography to CSS, building on Schuyler and Chris's work in tile rendering, and trying to make it easier to run your own map server - has always been to take an activity that's already fun and interesting, and make it easy, fun and interesting. Maps on the internet should not be difficult to publish or overly dependent on single providers like Google, and it's the details in all the bits of glue that make this possible.

For my part, I agreed with Dane to focus on correctness in Cascadenik's output. I put the code away for a while and when I came back a little while ago, it was obvious that a lot of old, bad decisions I had made were in need of some fixing. It's important for good, small tools to behave in predictable ways and I've been taking a lot of cues from what I consider to be prescient, amazing design decisions in HTML and CSS and doing my best to apply them to portable and easy web cartographic stylesheets.

The changes we made fall into three broadish areas:

- Cascadenik now knows more about paths, so if you ask it to create a stylesheet for Mapnik and put it in some directory, it'll try to be just a little bit smarter about how it names things based on where they are and where they came from.

- Cascadenik also has a lot fewer options when compiling, which I think is a good thing. Dane had to update many of my early, now-wrong assumptions with a bunch of patches that added new optional behavior flags, and I used the weekend to change those underlying assumptions so there didn't need to be so many flags.

- Nino introduced an incredibly cool new way to manage data sources which I think is going to make working with data a lot more palatable.

This isn't quite the place for all the technical details, but I think it's worth repeating that the reason for all this work and effort is to open a certain kind of activity to new groups of people. Dane became an honest-to-god C++ programmer through his exposure to Mapnik and I think that makes him a saint or a hero or both, but it shouldn't be necessary for ordinary users to make this same transition. Rather, it should be normal that people can approach maps and cartography and do interesting things with them, things different from the usual "pizza places in city X" use case offered by the Googles of the world. Like, I've got this wallet that Gem made for me (out of indestructible tyvek!) and these bad-ass shoes that I designed on Zazzle.com:

This is a synthetic preview image that Zazzle showed me when I was posting my renders of the Oakland Assessor's Parcels shapefile:

Pretty close right?

Anyway, most of what I've been doing for the past few years in these occasional experiments and releases has been an attempt to shrink problems, so that activities which might otherwise require substantial effort or time or money or people can require less of all those things, raising the RPE's as Kellan might put it.

I'll see what I can do about making the shoes publicly buyable.

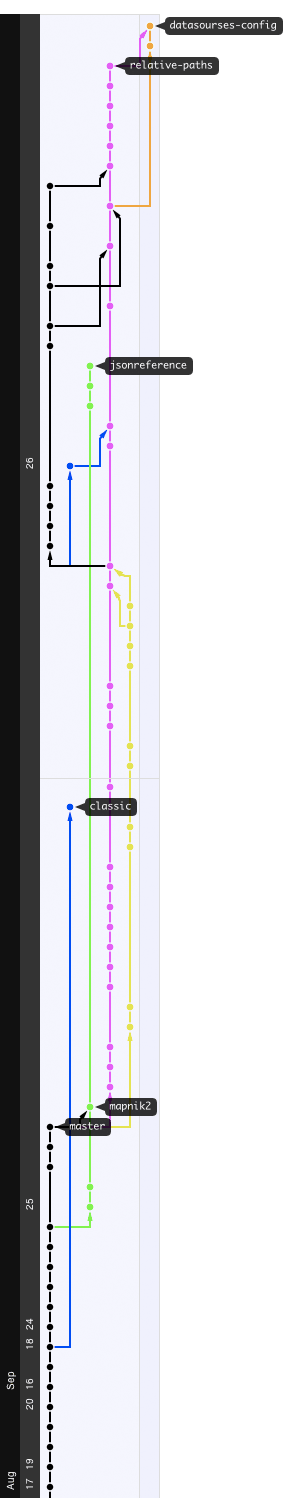

Also, here's that awesome thing Github does where they show you everybody's changes in a long horizontal graph:

Aug 21, 2010 6:42pm

release often

A few code-like things I've been working on lately.

Polymaps

Polymaps is a result of our summerlong collaboration with SimpleGeo. We've been working on it for some time, but yesterday we announced it for realsies and saw an amazing response from all over the internet. This one's been doubly rewarding for us, since it's also the result of a summerlong collaboration with Mike Bostock of Protovis fame. Mike's been on our radar since he showed off Protovis when Tom and I visited Stanford a while back. It was a week-old at the time and already full of promise. This Javascript thing, I think it will go far.

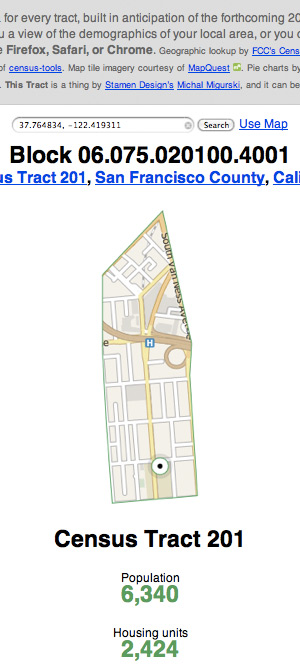

Census Tools

Census Tools is a small thing I put together last week, to extract data from the 2000 U.S. Census by subject and geography. I've just added the ludicrously detailed Summary File 3 with loads of information on housing, commutes, and other topics. Also, Shawn added a second script, text2geojson.py, which converts the textual output of census2text.py into neatly-formed GeoJSON. This makes it trivially compatible with Polymaps!

Walking Papers

Walking Papers gained two new translations recently. Maxim Dubinin provided a complete version of the site in Russian, while Frank Eriksson has been working on it in Swedish. This will bring our total number of languages to ten, and it's a fascinating case study in the power of using Git (and Github) for open source projects. Generally speaking, most of the translators haven't had to ask for permission or even informed me of their work until they were basically done. A staging site and a few git pulls later and we've got a new translation!

Maxim, who did the Russian version, was the first person to translate the title of the project in addition to the text content. He said they had a pretty good pun going as well:

Well, as I recall, "walking papers" are docs you're getting when you're fired, right? Turns out "Обходной лист" is exactly the same thing in russian - a piece of paper ("лист"), that you're getting when you're fired and use to "walk around people" ("обходить") in your org collecting signatures that you returned what you had too etc, so it is means almost exactly the same. Funny that that you can literally translate some idioms and they will still make sense. In the context of OSM I guess it translates as well you walk around, now geographically, not people wise and it is a piece of paper :)

So good.

Aug 17, 2010 5:21pm

presenting tilestache

Named in the spirit of the pun-driven life, TileStache is a response to a few years of working with tile-based geographic data and cartography, and an answer to certain limitations I've encountered in MetaCarta's venerable TileCache.

The edges I've bumped into might be esoteric, but I think they're also indicative of our many experiments in tile-based web mapping since 2007. The core functional needs of a tiling system are well handled by existing software: imagery from bitmap sources of aerial and scanned imagery, Mapnik renderings of OpenStreetMap data, and caches of remote WMS tiles. None of this is really the core point of TileStache, though it's all certainly table stakes.

The place where I've found a need for a new project is somewhere in the intersection between synthetic imagery, composites of existing imagery, and delivery of raw vector data to browsers. More and more we're dealing with the expressive possibilities of new web cartography in projects like Pretty Maps, and TileStache is a possible approach to data publishing that borrows a lot of the simplicity of TileCache while adding a dose of designed-in extensibility for creating new kinds of maps.

Isotiles

After developing Travel Time Maps with MySociety in 2008, we adapted our bitmap data imagery technique to tiled delivery. The follow-on Mapumental project hypothetically covers the entirety of the UK with dynamic, temporal data.

Here's a screenshot from one of the early demos, showing travel times around a city, lit up over the coastline:

It's not animated (check the Channel4 site for a video of Mapumental), but this is one of the constituent map tiles underlying the image:

Each pixel in this tile is a 24-bit value encoded in the red, green, and blue channels, expressing a time and speedily decoded by the Flash application in the browser. This part of the project is driven by a custom Layer class in TileCache, that pulls pre-computed time points (e.g. transit stations) from a database and renders cones around them. Some of the code might be findable in MySociety's source repository.
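The packing trick described above can be sketched in a few lines of Python. This is only an illustration of the idea, not the Mapumental code: the function names are made up here, and both the unit (seconds) and the channel order (red as the most significant byte) are assumptions.

```python
def encode_seconds(seconds):
    """Pack an integer travel time into an (r, g, b) tuple of 8-bit channels.

    Assumption: red holds the most significant byte. The original project
    may have used a different channel order or unit.
    """
    if not 0 <= seconds < 1 << 24:
        raise ValueError("value does not fit in 24 bits")
    return (seconds >> 16) & 0xFF, (seconds >> 8) & 0xFF, seconds & 0xFF


def decode_seconds(r, g, b):
    """Recover the integer from its three 8-bit channels."""
    return (r << 16) | (g << 8) | b


# Round trip for a hypothetical 90-minute journey.
pixel = encode_seconds(90 * 60)
minutes = decode_seconds(*pixel) / 60
```

Twenty-four bits gives about 16.7 million distinct values per pixel, which is plenty of headroom for travel times, and decoding is just two shifts and two ORs on the client side.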

What's interesting here is the idea of completely synthetic providers, i.e. those not directly based on GDAL sources, Mapnik renderings, or WMS servers. It's something I'm demonstrating in the TileStache Grid provider, an implementation of the UTM grid (U.S. National Grid and Military Grid Reference System) for overlay onto other spherical mercator maps.

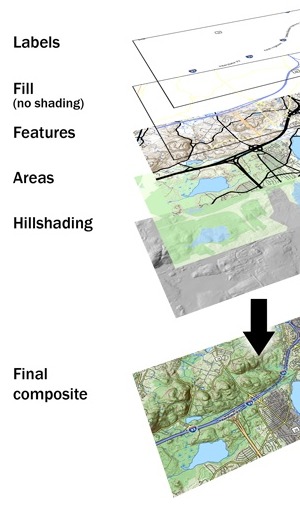

Composites

Lars Ahlzen's TopOSM is a longtime rendering project based on OpenStreetMap data and cartography built from constituent pieces of Mapnik. TopOSM combines renderings of streets, hills, and labels to create a beautiful, dimensional result:

Lars builds the final map up from a stack of images, many of which might themselves be expressed as tile layers:

In attempting to build a new Layer class for TileCache that expresses this idea, I found that it seemed to be impossible to access the full configuration of the system from within a given layer. There was no way to create a derived map sandwich, and I knew that Lars's own method was a homebrew of ImageMagick and similar tools. I'm interested in something a bit more systematic that implements something like Photoshop layers for cartography. The current Composite provider in TileStache provides layers, alpha channels, color fills and masks, and I'd like to implement transfer modes (e.g. Photoshop's hard light) if this sample proves to be interesting.
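For a sense of what "Photoshop layers for cartography" means at the pixel level, here's a rough sketch of the standard "over" operator applied to a stack of RGBA pixels. It is not the TileStache Composite provider, just plain Python with made-up function names, using non-premultiplied floats between 0 and 1.

```python
def over(top, bottom):
    """Porter-Duff 'over': place one RGBA pixel on top of another.

    Channels are non-premultiplied floats in the range 0 to 1.
    """
    tr, tg, tb, ta = top
    br, bg, bb, ba = bottom
    out_a = ta + ba * (1 - ta)
    if out_a == 0:
        return (0.0, 0.0, 0.0, 0.0)

    def blend(t, b):
        # Weight each color by how much of it remains visible.
        return (t * ta + b * ba * (1 - ta)) / out_a

    return (blend(tr, br), blend(tg, bg), blend(tb, bb), out_a)


def composite(stack):
    """Flatten a list of RGBA pixels, bottom layer first."""
    result = (0.0, 0.0, 0.0, 0.0)
    for pixel in stack:
        result = over(pixel, result)
    return result


# Opaque blue base with half-transparent red laid over it.
flattened = composite([(0.0, 0.0, 1.0, 1.0), (1.0, 0.0, 0.0, 0.5)])
```

A mask fits into the same scheme by multiplying a layer's alpha by the mask value before calling over(), and a transfer mode would swap out the blend() step.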

We've delivered this sort of composite cartography to clients in the past, but always through a combination of spit and chewing gum.

GeoJSON Data

Most recently, we've been developing Polymaps, an SVG-based map engine that can show regular image tiles in combination with vector overlays driven by GeoJSON data. Tiles turn out to be just as helpful for publishing and requesting vector data as they are for pixel-based images. We've modified TileCache to support this use in the past, but there are simply too many places where the code assumes pixel-based images for the exercise to be anything but frustrating. TileStache is designed to accommodate data-only tiles, including an example PostGeoJSON provider that converts PostGIS data to GeoJSON.

As the ability of browsers to interpret and display a wider variety of imagery improves, we're going to see this data tile concept become increasingly useful. Why stop at image tiles, when you might want to render roads that can be rolled-over or clicked directly? Why assume dynamic data services, when TMS-style tile URLs (e.g. */12/656/1582.png) can be hosted from simple storage services or plain filesystems?
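Part of why plain tile URLs work so well from static storage is that the path is pure arithmetic on a location and a zoom level. Here's a minimal sketch of the usual spherical mercator calculation; the function names are hypothetical, and it assumes the zoom/column/row layout with rows counted from the top, as most web maps do. A strict TMS server counts rows from the bottom instead.

```python
import math


def tile_for(lat, lon, zoom):
    """Return the (column, row) of the spherical mercator tile containing a point.

    Assumption: rows are counted from the top of the map. For strict TMS,
    flip the row with (2 ** zoom - 1 - row).
    """
    n = 2 ** zoom
    column = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    row = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    return column, row


def tile_path(lat, lon, zoom, extension="png"):
    """Build a relative path like zoom/column/row.png for a static tile store."""
    column, row = tile_for(lat, lon, zoom)
    return f"{zoom}/{column}/{row}.{extension}"


# The same arithmetic serves image tiles and data tiles alike.
image_tile = tile_path(37.8044, -122.2712, 12)
data_tile = tile_path(37.8044, -122.2712, 12, extension="json")
```

Since nothing here depends on a running service, the resulting paths can point at a bucket or a directory of files just as easily as at a dynamic server.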

Anyway.

It's early days, but we're finding that the limitations around in-browser display of layers and data are increasingly down to the display of SVG or Canvas rather than any particular native slowness in Javascript itself, so we're thinking that our still-fairly-intensive experimental demos getting a few kind words from friends like Nathan, Alyssa, and Jen will calmly scroll into the window of normalcy within the next year or so. We also know that other developers are thinking about some of the same concerns that the motivating goodies above address. For example, Dane tells me that the current bleeding edge of the map-rendering library Mapnik includes basic image compositing, masking, and GeoJSON output right there in the core.

Really what we're looking at is a future filled with work like Brett Camper's amazing 8-Bit Cities, "an attempt to make the city feel foreign yet familiar ... to evoke the same urge for exploration, abstract sense of scale, and perhaps most importantly unbounded excitement."

What are the tools that help make this possible?

Aug 12, 2010 5:30am

census-tools

The U.S. Census publishes an astonishing volume of data, notably with the most recent 2000 count. The demographic data contained in each of the summary files is precise, detailed, and distributed in a difficult-to-understand text format. The documentation for summary file #1 alone (race, age, sex) is a 637 page PDF file, and the actual data is stored in a maze of zip files all alike.

I've poked at these before, but I recently got a bee in my bonnet about making them available in a more useful form so they could be mapped. I talked to Josh Livni (of Land Summary) quite a while back about his plans for a demographic summary site that would store everything in a database in the cloud. Then Amazon made it available as a public dataset. Still I was not satisfied - both approaches to handling the data seemed a bit ocean-boiling in retrospect.

I've been experimenting with something I'm tentatively calling census-tools that seeks to make this data a bit more accessible. I'm motivated by the idea that predictably-structured zip files stored on a web server and accessed with Python's excellent stream-handling libraries might actually be considered quite a good API, so the first tool in the repository proceeds from there. It does a very simple thing: given an optional U.S. state, a geographic summary level (e.g. census tract or county), and a type of data, it unzips those remote files into memory and converts them to a tab-separated values file.

Here's an example:

python census2text.py --verbose --wide --state=Hawaii --geography=county --table=P18 --output=hawaii-households.txt

It outputs a chatty text file of household data for every county in Hawaii into a file called hawaii-households.txt. It takes about a minute to churn through a 2.8MB zip file and output the results. Omitting the state name gets you every county in the U.S. in about 20 minutes:

python census2text.py --verbose --wide --geography=county --table=P18 --output=national-households.txt
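The zip-files-as-API idea boils down to something like the sketch below. It is not the code in census2text.py: the function name, the member name, and the assumption that the zipped file is comma-separated are all stand-ins for illustration. The bytes would normally come from an HTTP download, for example urllib.request.urlopen(url).read(), but the function itself only needs bytes in memory.

```python
import csv
import io
import zipfile


def zipped_csv_to_tsv(zip_bytes, member_name, out):
    """Convert one comma-separated member of an in-memory zip to tab-separated text.

    zip_bytes: the raw bytes of a zip archive, never written to disk.
    member_name: hypothetical name of the file inside the archive.
    out: any writable text file object.
    Returns the number of rows written.
    """
    count = 0
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        with archive.open(member_name) as raw:
            text = io.TextIOWrapper(raw, encoding="latin-1", newline="")
            writer = csv.writer(out, delimiter="\t", lineterminator="\n")
            for row in csv.reader(text):
                writer.writerow(row)
                count += 1
    return count
```

Because the archive is wrapped in io.BytesIO, nothing touches the filesystem until the tab-separated output is written.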

I tested with Hawaii because it's small, and immediately discovered the strangely underpopulated Kalawao County:

The county is coextensive with the Kalaupapa National Historical Park, and encompasses the Kalaupapa Settlement where the Kingdom of Hawai'i, the territory, and the state once exiled persons suffering from leprosy (Hansen's disease) beginning in the 1860s. The quarantine policy was lifted in 1969, after the disease became treatable on an outpatient basis and could be rendered non-contagious. However, many of the resident patients chose to remain, and the state has promised they can stay there for the rest of their lives. No new patients, or other permanent residents, are admitted. Visitors are only permitted as part of officially sanctioned tours. State law prohibits anyone under the age of 16 from visiting or living there.

Fascinating.

Anyway, this small amount of information can be quite hard to get to. Between the impenetrable formatting of the geographic record files, the bewildering array of different kinds of geographic entities, and the depth of geographic minutiae, it can take quite a bit of head-scratching to extract even the first bits of information from the U.S. Census.

I hope this first tool makes it a little bit less of a hassle. I'd accept whatever patches people choose to offer: support for summary files beyond SF1, additional geographic summary levels, general patches, and more.

Aug 5, 2010 7:05am

stress conditions

I'm at Camp Roberts again for a few days, working on Walking Papers with friends from STAR-TIDES, FortiusOne, Google, and Gonzo Earth on open source, geographic crisis response technology. Being in a military environment working on responses to high-speed disaster has me thinking about stress and preparedness. Two excellent magazine articles on the subject crossed my path recently, both forming a cohesive view on the privilege of living without stress. Privilege is driving a smooth road and not even knowing it, and access to that road is contested. Some are born on it, some never reach it, some resent its existence, and some can't shake the memory of the ditch alongside.

Packing Heat, "conditions of readiness," and the gun lobby:

Contempt for Condition White unifies the gun-carrying community almost as much as does fealty to the Second Amendment. "When you're in Condition White you're a sheep," one of my Boulder instructors told us. "You're a victim." The American Tactical Shooting Association says the only time to be in Condition White is "when in your own home, with the doors locked, the alarm system on, and your dog at your feet ... the instant you leave your home, you escalate one level, to Condition Yellow." A citizen in Condition White is as useless as an unarmed citizen, not only a political cipher but a moral dud. ... Having carried a gun full-time for several months now, I can attest that there's no way to lapse into Condition White when armed. ... Condition White may make us sheep, but it's also where art happens. It's where we daydream, reminisce, and hear music in our heads. (Dan Baum in Harpers, sorry for the paywall)

Under Pressure, chemistry, and health/stress feedback loops:

The deadliest diseases of the 21st century are those in which damage accumulates steadily over time. (Sapolsky refers to this as the "luxury of slowly falling apart.") Unfortunately, this is precisely the sort of damage that’s exacerbated by emotional stress. ... One of the most tragic aspects of the stress response is the way it gets hardwired at a young age - an early setback can permanently alter the way we deal with future stressors. The biological logic of this system is impeccable: If the world is a rough and scary place, then the brain assumes it should invest more in our stress machinery, which will make us extremely wary and alert. There's also a positive feedback loop at work, so that chronic stress actually makes us more sensitive to the effects of stress. (Jonah Lehrer in Wired)

Jul 31, 2010 11:16pm

state of the map U.S.

The first domestic edition of the annual OpenStreetMap State Of The Map conference is in a few weeks, and I'll be there in Atlanta along with all the funky geography enthusiasts.

Jun 17, 2010 7:02am

blog all kindle-clipped locations: the big short

I picked up Michael Lewis's new book, The Big Short, pretty much as soon as it hit the Kindle a few weeks ago. It's a post-catastrophe account of the subprime mortgage crisis, told through the eyes of a small group of traders who shorted the supposedly unshortable mortgage backed securities that made everyone rich five years ago.

It's partially a financial story, but to me it's also a story about assumptions. I've been thinking a bit about the effects of unspoken, day-to-day dependencies that we all rely on in our lives. Can we live without them? Are we light enough on our feet to adjust when they shift? Do we even know what they are, and can we explain how they fit together? In a small company like mine, these questions can lead to some fairly serious existential crises. A few years ago, our client base seemed disproportionately tied to the galloping Web 2.0 economy. More recently, the brash announcement by Apple that Flash would be unsupported on the iPad confirmed a long-held suspicion that the platform was on rocky ground. In the trenches of my day-to-day as technology director, I've become excessively sensitive to the problems of cross-dependencies among projects, code bases, and servers. This is not so much an issue of identifying single points of failure as it is a matter of understanding which doorknobs you've tied your teeth to and subsequently forgotten.

Three questions:

- Do you depend on anything outside your control? What is it?

- Can you repeat past successes with those same externalities?

- Could you quarantine, isolate, or replace them, if you had to?

The Big Short is the story of one particular set of external dependencies that turned out to be hopelessly intertwined. Specifically, it's about the revelation that an entire class of financial products based on the performance of mortgage payments was more deeply interdependent and market-distorting than anyone had imagined. It's the moment near the end of a Stephen King novel where all the townspeople are revealed to have first names that start with "K" and they're sitting silently in their cars waiting for you up the road. NPR's Planet Money does a better job of explaining the details ("we bought the toxic asset..."), but the underpinning of the story shows how difficult it is to reject a lie when your livelihood depends on believing it.

Anyway.

Loc. 476-82, an opening anecdote showing the matter-of-fact cultural role of Wall Street greed:

When a Wall Street firm helped him to get into a trade that seemed perfect in every way, he asked the salesman, "I appreciate this, but I just want to know one thing: How are you going to fuck me?" Heh-heh-heh, c'mon, we'd never do that, the trader started to say, but Danny, though perfectly polite, was insistent. We both know that unadulterated good things like this trade don't just happen between little hedge funds and big Wall Street firms. I'll do it, but only after you explain to me how you are going to fuck me. And the salesman explained how he was going to fuck him. And Danny did the trade.

Loc. 483-87, Steven Eisman is one of the main characters, a brusque gadfly with odd listening habits:

Working for Eisman, you never felt you were working for Eisman. He'd teach you but he wouldn't supervise you. Eisman also put a fine point on the absurdity they saw everywhere around them. "Steve's fun to take to any Wall Street meeting," said Vinny. "Because he'll say 'explain that to me' thirty different times. Or 'could you explain that more, in English?' Because once you do that, there's a few things you learn. For a start, you figure out if they even know what they're talking about. And a lot of times they don't!"

Loc. 985-89, on the undesirability of defending an idea:

Inadvertently, he'd opened up a debate with his own investors, which he counted among his least favorite activities. "I hated discussing ideas with investors," he said, "because I then become a Defender of the Idea, and that influences your thought process." Once you became an idea's defender you had a harder time changing your mind about it. He had no choice: Among the people who gave him money there was pretty obviously a built-in skepticism of so-called macro thinking.

Loc. 1788-96, on the role of research that seemingly no one else wants to do. This is actually one of the most interesting aspects of The Big Short for me, the relative rarity of legwork compared to the ease of sticking to first appearances:

It wasn't a question two thirty-something would-be professional investors in Berkeley, California, with $110,000 in a Schwab account should feel it was their business to answer. But they did. They went hunting for people who had gone to college with Capital One's CEO, Richard Fairbank, and collected character references. Jamie paged through the Capital One 10-K filing in search of someone inside the company he might plausibly ask to meet. "If we had asked to meet with the CEO, we wouldn't have gotten to see him," explained Charlie. Finally they came upon a lower-ranking guy named Peter Schnall, who happened to be the vice-president in charge of the subprime portfolio. "I got the impression they were like, 'Who calls and asks for Peter Schnall?'" said Charlie. "Because when we asked to talk to him they were like, 'Why not?'" They introduced themselves gravely as Cornwall Capital Management but refrained from mentioning what, exactly, Cornwall Capital Management was. "It's funny," says Jamie. "People don't feel comfortable asking how much money you have, and so you don't have to tell them."

Loc. 1830-34, on arguing convincingly:

Both had trouble generating conviction of their own but no trouble at all reacting to what they viewed as the false conviction of others. Each time they came upon a tantalizing long shot, one of them set to work on making the case for it, in an elaborate presentation, complete with PowerPoint slides. They didn't actually have anyone to whom they might give a presentation. They created them only to hear how plausible they sounded when pitched to each other. They entered markets only because they thought something dramatic might be about to happen in them, on which they could make a small bet with long odds that might pay off in a big way.

Loc. 2206-11, more on Eisman's listening habits:

Eisman had a curious way of listening; he didn't so much listen to what you were saying as subcontract to some remote region of his brain the task of deciding whether whatever you were saying was worth listening to, while his mind went off to play on its own. As a result, he never actually heard what you said to him the first time you said it. If his mental subcontractor detected a level of interest in what you had just said, it radioed a signal to the mother ship, which then wheeled around with the most intense focus. "Say that again," he'd say. And you would! Because now Eisman was so obviously listening to you, and, as he listened so selectively, you felt flattered.

Loc. 3260-64, on $1.2 billion:

In early July, Morgan Stanley received its first wake-up call. It came from Greg Lippmann and his bosses at Deutsche Bank, who, in a conference call, told Howie Hubler and his bosses that the $4 billion in credit default swaps Hubler had sold Deutsche Bank's CDO desk six months earlier had moved in Deutsche Bank's favor. Could Morgan Stanley please wire $1.2 billion to Deutsche Bank by the end of the day? Or, as Lippmann actually put it - according to someone who heard the exchange - Dude, you owe us one point two billion.

Loc. 3413-22, on eight days of chlorine for all of Chicago:

His wife's extended English family of course wondered where he had been, and he tried to explain. He thought what was happening was critically important. The banking system was insolvent, he assumed, and that implied some grave upheaval. When banking stops, credit stops, and when credit stops, trade stops, and when trade stops - well, the city of Chicago had only eight days of chlorine on hand for its water supply. Hospitals ran out of medicine. The entire modern world was premised on the ability to buy now and pay later. "I'd come home at midnight and try to talk to my brother-in-law about our children's future," said Ben. "I asked everyone in the house to make sure their accounts at HSBC were insured. I told them to keep some cash on hand, as we might face some disruptions. But it was hard to explain." How do you explain to an innocent citizen of the free world the importance of a credit default swap on a double-A tranche of a subprime-backed collateralized debt obligation? He tried, but his English in-laws just looked at him strangely. They understood that someone else had just lost a great deal of money and Ben had just made a great deal of money, but never got much past that. "I can't really talk to them about it," he says. "They're English."

Loc. 3747-52, on being dumb and looking for grownups:

The big Wall Street firms, seemingly so shrewd and self-interested, had somehow become the dumb money. The people who ran them did not understand their own businesses, and their regulators obviously knew even less. Charlie and Jamie had always sort of assumed that there was some grown-up in charge of the financial system whom they had never met; now, they saw there was not. "We were never inside the belly of the beast," said Charlie. "We saw the bodies being carried out. But we were never inside." A Bloomberg News headline that caught Jamie's eye, and stuck in his mind: "Senate Majority Leader on Crisis: No One Knows What to Do."

Loc. 3880-82, a last word on dependencies:

The changes were camouflage. They helped to distract outsiders from the truly profane event: the growing misalignment of interests between the people who trafficked in financial risk and the wider culture. The surface rippled, but down below, in the depths, the bonus pool remained undisturbed.

Jun 16, 2010 7:25am

clipper futures

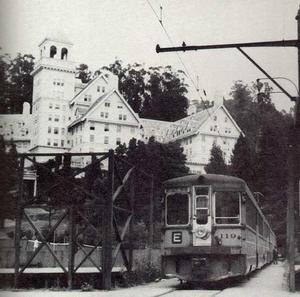

On June 16th 2010, the Bay Area Metropolitan Transportation Commission released Clipper, a rebranding of the original Translink payment card for public transportation. In the ensuing five years, Clipper has overtaken other forms of fare and payment to become the only remaining acceptable method of beeping a ride on Bay Area public transit. It started with San Francisco's Muni and Alameda County Transit, later expanding to BART, Santa Clara Valley, San Mateo's SamTrans, Golden Gate Transit, Yellow Cab, Veterans Cab Co., and most recently the full complement of toll bridges including the Golden Gate, Bay Bridge, Richmond and San Mateo bridges thanks to Caltrans. Clipper is everywhere around the Bay.

It's difficult to remember now that just half a decade ago, local transit was a confusing jumble of mismatched schedules and fares. Riders no longer restrict themselves to monthly passes from a single agency, choosing instead to hop from one mode to another as their needs emerge. Unified payment makes most of this activity possible: the Clipper card has grown in importance along with the government ID and credit card. Initially envisioned as purely a multi-agency payment card, Clipper has long since erased the functional distinctions among agencies thanks to the smooth thoughtlessness of synchronizing payments on all sides of the Bay.

Similar to the historical unification of competing streetcar companies under the umbrella of city-operated transportation authorities, we expect that late next year or possibly early 2017, the MTC Commissioners will retire the independent identities of SF Muni, AC Transit, Santa Clara VTA and SamTrans to be replaced by a unified "Bay Area Clipper" name. Most local observers view this as a pure formality, though it's expected that in keeping with its historic reluctance to participate in inter-agency plans, the BART Board will delay participation in the new name until 2018 at the earliest. They'll come around eventually.

Clipper has also managed to tap into a full range of data streams connected to urban transit, making them more interesting and valuable along the way. The fluidity and ease of motion we enjoy now is also made possible through the nearly ubiquitous availability of up-to-the-minute locations for participating vehicles, made available from the agencies themselves through a public stream of real-time updates accessible to programmers and normal users alike. Buses and trains are almost universally equipped with location-tracking devices based on GPS and wireless signals, and a suite of applications from commercial, open-source, and philanthropic developers build on the predictive, route-finding data services published for every vehicle in the system. This has helped ease the pain of occasional budget cuts and service disruptions, giving users a way to minimize the amount of time they waste waiting around for their next ride.

Interestingly, the commercial providers of wi-fi, cell tower, and other "RF beacon" geolocation services have transitioned to something more like a regulated utility model. Originally established to provide location lookup services for smartphones near the end of the 00's, the largest of these providers recently folded their own billing for Bay Area users into the Clipper system. The number of lookups you do for your physical location or finding an arrival time for a bus are simply charged to your card. Many users never even see these charges, since a large number of employers pick up the tab for employee accounts on a pre-tax basis.

More recently, concerns have surfaced around the privacy of data tracked through the Clipper servers. People are understandably jittery, after the numerous social networking data breach debacles of three years ago that seemingly turned a generation off of oversharing. MTC have gone to great pains to assure users of the system that their data is safe from "getting zucked", and they've begun to provide free personal monitoring services to users of Clipper. It's now possible to access a complete, up-to-the-minute stream of your own card usage (including the geographic location of each beep) along with a record of access requests to that same data by parents, friends, mobile apps, credit reporting firms, or government agencies monitoring transportation use for oil-credit tax breaks. If someone's peeking at your transit history, you're the first one to know.

As Clipper begins its fifth year, we're seeing movement toward expanding the card into other uses around the Bay Area. Loose legal definitions of "transportation spending" have bike and rollerblade shops lobbying to allow repairs and maintenance to be charged to a Clipper account. The financial district congestion zone has announced a feasibility study for ditching its proprietary payment structure for Clipper. Even hardware companies have started to retool their smartchip-based door locks to optionally work with the system, bringing the card right into private homes. It seems too obvious to mention and too pervasive to notice, but seemingly everywhere you turn the one-time bus payment card has turned into a key to complete mobility and total access.

Let's try and not screw this one up.

Jun 10, 2010 7:05am

swishy curves, where you want them

There's a short series of posts in here, but I'm out of practice with blogging so I'll start with just this.

I've exhumed some of last year's thinking on heat maps, and re-encountered Zach and Andy's excellent series of posts on geographic isolines. Zach Johnson posted some ideas on a quick-and-dirty method for generating isolines from a field of point measurements, using the Delaunay triangulation (which I've mentioned before). Andy Woodruff followed up with ideas about curves, specifically the problem of passing smooth curves through a set of points.

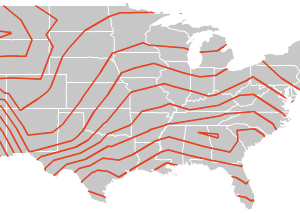

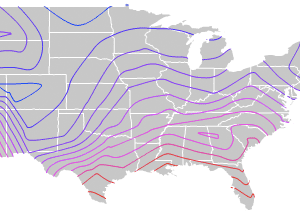

The general idea is to go from this:

...to this:

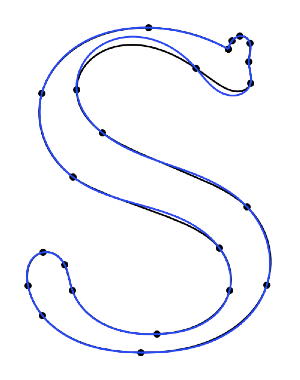

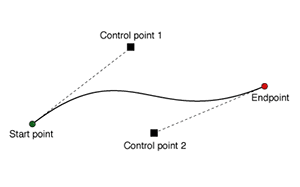

...using a process somewhat like this:

("S" from Raph Levien)

Anyway. Splines are pretty much a solved problem, in the sense that your typical graphics library is going to support at least cubic splines - Flash, SVG, etc. all have native methods for making smooth curves between two endpoints with two additional control points. If you've used Adobe Illustrator at any time in the past 23 years, you'll know how this works:

Andy writes about the generation of curves that are smooth, yet guaranteed to pass through a full set of points without appearing discontinuous. What makes this difficult is that there are an infinite number of solutions, generally differentiated by the amount of tension or distance along connections between pairs of points. It's also difficult to express these solutions using control points; where do you place them? Often you need to make assumptions about the correct slope through a given point, and often those assumptions lead to some weird-looking results.

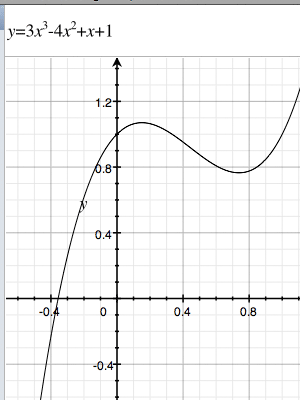

With a bit of basic algebra it's possible to deconstruct these smooth curves into a set of parametric equations. The trick is to introduce a new independent variable, t, which represents progress along the curve through a third dimension. T is for time, because it makes sense to think about x and y in the image plane varying through time. You can find a smooth curve through any set of points - a straight line through two points, a quadratic curve through three points, a cubic curve through four points, etc. I'm stopping at four points and the cubic curve, because it looks good and is easy to calculate.
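To make the four-point case concrete, here is a minimal sketch of fitting one cubic per coordinate by solving a small system of linear equations. It assumes the four points sit at t = 0, 1, 2, 3 (a choice made for illustration), and it uses a plain Gauss-Jordan solver with exact fractions in place of a symbolic math library. The names `solve`, `cubic_through`, and `evaluate` are hypothetical.

```python
from fractions import Fraction

def solve(matrix, rhs):
    """Solve a small square linear system by Gauss-Jordan elimination."""
    n = len(rhs)
    rows = [[Fraction(v) for v in row] + [Fraction(b)]
            for row, b in zip(matrix, rhs)]
    for col in range(n):
        # Find a row with a non-zero pivot and swap it into place.
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        # Scale the pivot row so the pivot becomes 1.
        p = rows[col][col]
        rows[col] = [v / p for v in rows[col]]
        # Clear this column from every other row.
        for r in range(n):
            if r != col:
                f = rows[r][col]
                rows[r] = [a - f * b for a, b in zip(rows[r], rows[col])]
    return [row[-1] for row in rows]

def cubic_through(values, ts=(0, 1, 2, 3)):
    """Coefficients (a, b, c, d) of a*t^3 + b*t^2 + c*t + d that
    takes each of the four values at the matching t (assumed spacing)."""
    matrix = [[t ** 3, t ** 2, t, 1] for t in ts]
    return solve(matrix, values)

def evaluate(coeffs, t):
    """Evaluate the cubic at t using Horner's rule."""
    a, b, c, d = coeffs
    return ((a * t + b) * t + c) * t + d

# Four arbitrary points; x and y are fitted as separate functions of t.
points = [(0, 0), (1, 2), (3, 3), (4, 1)]
x_coeffs = cubic_through([x for x, y in points])
y_coeffs = cubic_through([y for x, y in points])
```

Stepping t from 0 to 3 in small increments and evaluating both cubics traces a single curve that passes through all four points in order.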

Here's one such 2D cubic curve:

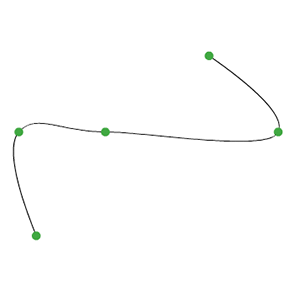

It might look familiar if you've ever used a TI-81. The twisty math bit here is that given a set of any four arbitrary points, it's possible to generate one such curve that passes through them all. The second twisty math bit is that you can do this for the x and y components of the curve separately, creating two separate functions over t that move through space and describe a curve. Wikipedia has an excellent article on systems of linear equations, though I avoided most of the tedious algebra by using Sympy, a symbolic math library for Python that does the work. This is the resulting function, and here is an animation showing eight separate cubic functions over a set of eight arbitrary points:

Here's the same image showing all the functions overlaid on top of one another, with the "closest" central one highlighted in turn:

This is the third twisty math bit. To create a single, smooth curve out of all these pieces, you adjust the relative influence of each one over its length using a basis function. They're described in excruciating detail at ibiblio.org, where you can scroll down that page to play with some interactive java applets. I've cheated, and used sine waves that still look something like this over the length of the curve:

Putting it all together, you get this transition from simple cubic curves to a complete, smooth system that closes a full set of points:

The code for all this is a bit of a hairball (also syntax-highlighted), but I hope potentially useful.
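For a smaller taste of the blending step, here is a rough sketch rather than the linked code itself. It assumes a closed loop of points, two overlapping four-point cubics per segment, and a squared half sine wave as the weight; all three are simplifications chosen for illustration, and the function names are hypothetical.

```python
import math

def lagrange(values, t):
    """Value at t of the polynomial that equals values[k] at t = k."""
    total = 0.0
    for k, v in enumerate(values):
        term = v
        for j in range(len(values)):
            if j != k:
                term *= (t - j) / (k - j)
        total += term
    return total

def sine_weight(u):
    """Rises smoothly from 0 at u=0 to 1 at u=1, flat at both ends."""
    return math.sin(0.5 * math.pi * u) ** 2

def blended_point(points, i, u):
    """Point between points[i] and points[i+1] on a closed loop,
    for 0 <= u <= 1, mixing the two cubics that overlap there."""
    n = len(points)
    # Earlier cubic runs through points i-2 .. i+1: our segment is t in [2, 3].
    early = [points[(i + k - 2) % n] for k in range(4)]
    # Later cubic runs through points i-1 .. i+2: our segment is t in [1, 2].
    late = [points[(i + k - 1) % n] for k in range(4)]
    w = sine_weight(u)
    out = []
    for axis in (0, 1):
        a = lagrange([p[axis] for p in early], 2 + u)
        b = lagrange([p[axis] for p in late], 1 + u)
        out.append((1 - w) * a + w * b)
    return tuple(out)

# Eight arbitrary points around a loop, sampled into one closed outline.
loop = [(0, 0), (2, -1), (4, 0), (5, 2), (4, 4), (2, 5), (0, 4), (-1, 2)]
outline = [blended_point(loop, i, k / 16)
           for i in range(len(loop)) for k in range(16)]
```

Because the weight is flat at both ends, each segment starts out following one cubic exactly and finishes following the next, so neighbouring segments meet without a visible corner.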

One reason I find this method interesting is that the end product is actually not a curve at all, but a series of points joined by straight line segments. You provide the illusion of a curve by making lots of them, each very short. This means that the resulting "curves" are trivially compatible with geographic software like PostGIS or Mapnik, and therefore possible to simplify using tools like Mapshaper, distribute in formats like Shapefile, and render with plain old Tilecache. At the cost of additional file size, you are freed from Flash and dumped wide-eyed and blinking into the world of actual geography.
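As an illustration of that last point, here is a small sketch that samples a parametric curve into short straight segments and writes them out as a WKT `LINESTRING`, a plain-text geometry format that geographic tools such as PostGIS can read. The `example_curve` function is a made-up stand-in for a fitted curve, and the step count is an arbitrary choice.

```python
def sample_polyline(curve, t0, t1, steps):
    """Evaluate curve(t) at steps+1 evenly spaced values from t0 to t1."""
    return [curve(t0 + (t1 - t0) * k / steps) for k in range(steps + 1)]

def to_wkt(points, precision=6):
    """Format a list of (x, y) pairs as a WKT LINESTRING."""
    coords = ", ".join(
        f"{x:.{precision}f} {y:.{precision}f}" for x, y in points
    )
    return f"LINESTRING ({coords})"

def example_curve(t):
    # Hypothetical parametric cubic standing in for a fitted curve.
    return (t ** 3 - 3 * t, t ** 2)

# More steps means shorter segments: a smoother look and a bigger file.
line = sample_polyline(example_curve, -2.0, 2.0, 64)
wkt = to_wkt(line)
```

Raising or lowering `steps` is the file-size tradeoff in miniature: the geometry stays a plain list of vertices either way.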

Apr 16, 2010 5:52am

blog all kindle-clipped locations part III

Axis Maps cartographer Zachary Forest Johnson wrote this loving essay-length biography of William Bunge, radical geographer. I loved this excerpt on frozen moments in time, and the necessity of choosing an instant when mapping:

Much of Bunge's cartographic theory is contained in the foreword to the book. Speaking of a historical farm map created for the book (portion above): Maps attempt to integrate over time, that is, maps assume an average span of time. This means that nothing that moves is mapped, and therefore property is inherently preferred over humans. In order to restore truth to the map it is necessary to achieve a fiction of accuracy through an assumption, namely that the map is drawn at an exact instant of time. In this case, the time is June 20, 1915 at 2 p.m. on a sunny day. This fiction freezes the men and horses on the roads, the strawberry pickers in the fields, as well as the crops in rotation and the animals in pasture. This restores life to the dead map of property.

And this, on the relationship between communication technique (old-school graphic design equipment!), choice of study area, and communication efficacy:

Learning how to make a clean line, lay a rip-a-tone pattern, or design a map with the right combination of point, area, and line symbols did not seem to be critical knowledge to members of a survival culture. But the school decentralization study made sense. The next three weeks both saved and came to define the potential of the Expedition. The decentralization report - rich in graphs and maps created by Bunge and the Expedition's students - was adopted by a community group and forced the Board of Education to respond to charges that its school districting plans were illegal.

Chris Heathcote blogged a lengthy passage of A.B. Austin's 1931 The Art Of Loitering. I especially enjoyed this pair of sentences about the then-new practice of working class pleasure-driving on weekends, and the new ownership of the roads by cars:

I had really no business to be meandering along their road. My creeping progress might spoil someone's new-found pleasure. For it was their road. It had been built, or rather adapted, for them. Without its glossy blue-black surface, its faultless camber, its generous width, its gentle curves, they could no more pursue their hobby, seize their thrill, than the railway train could run without its track.

Mark Rickerby writes about literacy in coding, and suggests that good programmers are good editors: "I started noticing a single quality shared by all the coders who were producing the most destructive output: they seemed to have a compulsive fear of changing code after it was written."

I have come to believe that the vocabulary of technology is not sufficient to understand situations like these. Primarily, spaghetti code is a literary failing. Through my observations of the developers responsible for these wrecks - they often turned out to be poor prose writers and some were very arrogant about their coding abilities. I believe the core skill that these cowboys lack is that of editing - an instinctive drive towards pruning and tweaking that all good writers know is one of the most important components of literary creation.

On several distinct forms of literacy:

In his further discussion of computer literacy, Kay outlines three core aspects derived from an understanding of English literacy: Access literacy (reading) Creation literacy (writing) Genre literacy (shaping context of style and form).

This was dense.

There is another constituency - self-employed men and women (often barely afloat) - who identify with the "haves," their present economic status notwithstanding. What they have is not so much current wealth, but a history of, or aspiration towards, status, authority, and autonomy. They are not willing to relinquish their past beliefs or their goals for the future. They conceive of themselves as self-reliant and as integral to what was once an undisputed notion of "American Exceptionalism." The number of the self-employed is expanding at a much faster pace than the population as a whole - to some extent out of necessity, as firms impose major cutbacks, forcing employees to go out on their own.

Thomas Frank's The Conquest Of Cool is about the rise of "hip consumerism", specifically as it's connected to advertising and menswear. There's quite a bit of Mad Men in here, and I'm especially interested in the idea that the culture and counterculture weren't quite so separate at the time, and that business culture was going through its own set of tumultuous changes mirroring those of the youth movement. Anyway I clipped a lot of passages here; maybe it means I need to buy the book.

First things first:

Conflicting though they may seem, the two stories of sixties culture agree on a number of basic points. Both assume quite naturally that the counterculture was what it said it was; that is, a fundamental opponent of the capitalist order. Both foes and partisans assume, further, that the counterculture is the appropriate symbol - if not the actual historical cause - for the big cultural shifts that transformed the United States and that permanently rearranged Americans' cultural priorities. They also agree that these changes constituted a radical break or rupture with existing American mores, that they were just as transgressive and as menacing and as revolutionary as countercultural participants believed them to be. More crucial for our purposes here, all sixties narratives place the stories of the groups that are believed to have been so transgressive and revolutionary at their center; American business culture is thought to have been peripheral, if it's mentioned at all. Other than the occasional purveyor of stereotype and conspiracy theory, virtually nobody has shown much interest in telling the story of the executives or suburbanites who awoke one day to find their authority challenged and paradigms problematized. And whether the narrators of the sixties story are conservatives or radicals, they tend to assume that business represented a static, unchanging body of faiths, goals, and practices, a background of muted, uniform gray against which the counterculture went through its colorful chapters. Postwar American capitalism was hardly the unchanging and soulless machine imagined by countercultural leaders; it was as dynamic a force in its own way as the revolutionary youth movements of the period.

Counterfactuals:

The 1960s was the era of Vietnam, but it was also the high watermark of American prosperity and a time of fantastic ferment in managerial thought and corporate practice. Postwar American capitalism was hardly the unchanging and soulless machine imagined by countercultural leaders; it was as dynamic a force in its own way as the revolutionary youth movements of the period, undertaking dramatic transformations of both the way it operated and the way it imagined itself.

On the study of selling out:

It is more than a little odd that, in this age of nuance and negotiated readings, we lack a serious history of co-optation, one that understands corporate thought as something other than a cartoon. Co-optation remains something we vilify almost automatically; the historical particulars which permit or discourage co-optation - or even the obvious fact that some things are co-opted while others are not - are simply not addressed.

On Wired, pretty much:

The revolutions in menswear and advertising - as well as the larger revolution in corporate thought - ran out of steam when the great postwar prosperity collapsed in the early 1970s. In a larger sense, though, the corporate revolution of the 1960s never ended. In the early 1990s, while the nation was awakening to the realities of the hyperaccelerated global information economy, the language of the business revolution of the sixties (and even some of the individuals who led it) made a triumphant return.

On permanent revolution:

The counterculture has long since outlived the enthusiasm of its original participants and become a more or less permanent part of the American scene, a symbolic and musical language for the endless cycles of rebellion and transgression that make up so much of our mass culture.

Apr 16, 2010 5:52am

blog all kindle-clipped locations part II

I've felt for some time that the discipline of Geography is being shifted to the foreground:

Whatever aspect of geography it is that you start with threatens to segue into a discussion on the most polarising topic there is: climate change. Miss Prism would be quick to notice that geography is no longer a polite subject for meal time. Something similar has happened to atlases. They were once placid, unhurried publications with additional information on the colours of national flags. Now atlases are freighted with maps showing cities that are likely to be submerged if the Arctic melts, or projected population growth, or the relative size of countries in terms of CO2 emissions, or areas where water scarcity will be most intense and resource wars most likely to break out. An atlas is beginning to look like a long-term forecast - history before it happens.

The Deflationist: How Paul Krugman found politics

Larissa MacFarquhar's New Yorker article on Paul Krugman's journey into lefty politics. There's some good stuff in here about the technical aspects of academic economics, its relationship to justice, and the progression of knowledge in a discipline: