tecznotes

Michal Migurski's notebook, listening post, and soapbox. Subscribe to ![]() this blog.

Check out the rest of my site as well.

this blog.

Check out the rest of my site as well.

Dec 28, 2005 6:15pm

kittinger

At Hill and Knowlton, we were in the habit of naming our various machines and hosts after satellites. I haven't worked there in over three years, but I've named my new Powerbook Kittinger.

Dec 21, 2005 7:39am

ocean store

OceanStore is neat:

OceanStore is a global persistent data store designed to scale to billions of users. It provides a consistent, highly-available, and durable storage utility atop an infrastructure comprised of untrusted servers. Any computer can join the infrastructure, contributing storage or providing local user access in exchange for economic compensation.

Well, except the economic compensation bit. If well-handled, this idea could take off like its close cousins DNS and Bittorrent. If poorly handled, someone else will do it because it feels like an idea whose time is close at hand. Google's already deployed something like it with Google Base, but they're blindly focused on its utility for "content owners" (They sound like the MPAA jackass on NPR this morning braying about "entertainment product").

OceanStore is touching on the same attitude towards storage I'm seeing in projects such as Atop Axiom, namely a slight distancing from SQL towards purer object stores. This move loses the big advantage of SQL's indexes and searches in favor of distributability and fuzzy degrees of confidence. Your credit card company isn't about to switch (SQL solved their problems neatly almost 30 years ago), but projects like ForwardTrack could play.

Dec 11, 2005 1:15am

pressthink groks bob woodward

In theory we send these people out to report back to us. Some of them penetrate the secret worlds of national security and government policy-making on our behalf. But if they keep going into the secret world they can come under the gravitational pull of another planet--the people in power, the secret-makers themselves. They're still sending back their reports, but have left our universe, so to speak.

It's a fascinating essay about the effects of access on insight. In this case, Bob Woodward comes under heavy scrutiny for his unique combination of top-level access and personal incuriousity. Arianna Huffington calls him "the dumb blonde of American journalism, so awed by his proximity to power that he buys whatever he's being sold." It's easy to forget that Woodward's big break in the Watergate case came at a time he was a lowly Metro desk writer, an unexpected source of pressure.

A few things occured to me as I read this:

- The cyclical (or pendular?) nature of access. Yesterday's outsider is today's insider. Today's bomb-throwing bloggers at the gates of the mass media are going to be tomorrow's establishment commentators. Lee Gomes describes this change in a recent WSJ article.

- The value of independents. Yesterday's announcement of Yahoo!'s purchase of Del.icio.us is a bit of a bummer. It'd be nice to have strong web firms that aren't part of the GYM, but right now everyone's getting bought. Like Bob Woodward's investigative journalism, enclosure within Yahoo! can't possibly be doing much good for anyone connected to Del.icio.us except its employees and investors.

- The value of independence. Keeping out means keeping perspective. How many fantasy & sci-fi stories are based on the premise that power corrupts, and diving too deeply into black magic affects your ability to reason? This is tremendously important (in my industry, anyway) at this moment that all the little guys are either bought, or trying hard to be. I ought to try to keep this in mind as I head for the lions' den this March.

Dec 7, 2005 7:57am

tagging on the rocks?

In How Tagging Could Gain Ground, Philipp Keller laments a lack of "new, visionary, inventive articles on tags."

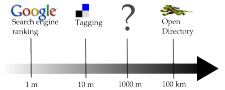

Keller argues that he's missing a "middle-distance" view of information, somewhere between folksonomic free-for-all of tags and the hieratic temple of DMOZ. His graph puts Google at the far-left, on the assumption that the search engine rewards recognition rather than novelty searches:

I have a few resopnses to this argument:

First, I think the results of Keller's quick survey of Google vs. Del.icio.us (using the search term cryptography) aren't representative. Del's audience skews heavily geekward, so it feels natural that links about math, technology and secrecy would be represented well. Picking another topic out of a hat, the results aren't so clean-cut. Google's results for organic farming lie at about the same level of generality as its results about cryptography. There are a lot of them, many fairly basic. Narrowing searches by adding terms (e.g. politics, how-to) helps squeeze the volume down a bit. Meanwhile, Del returns just 10 links, total. Hope they're good. Conversely, the quality of results for terms like web or blog in Del.icio.us is also generic and fairly undifferentiated. Google maintains a middle-distance from the user in more situations, while Del.icio.us swings wildly based on the composition of its user base.

Second, I'm losing faith in the potential for staying informed from tagged web content alone. I've been subscribed to several Del tag searches via RSS (e.g. attention, maps, infographics), and today I unsubscribed from the last of them. The data was far too general: I was either getting a very slow trickle of information, or a torrent. Attention in particular picked up sharply from fewer than a half-dozen items per day a few months ago, and accounted for a substantial percentage of my RSS volume. Many of these items were repeats or pointers to the Attention gang's own writings. Interesting, but I get this stuff via other channels, and wasn't learning enough new stuff.

Third, I think these two points show that a social bookmarky whatchamacallit like Del.icio.us has a population sweet spot. The quality of the links was highest when the system was populated by hardcore early adopters, and dropped when middle of the bell curve moved in. In particular, the quality drop was characterized by echoes and repetitiveness. I would argue that Folksonomy Helps Me Stay Informed On a Given Topic best when the diversity of a given tag/user/resource population is high and the headcount low.

Fourth, Keller is seriously on-target when he says that the "missing in-between view can be won by analyzing tags" - his love cluster example is like a textbook example of aspects in linguistics, showing the nuances of social meaning in a term as general as love. I expect that Del.icio.us could do something like this too, but the API terms are too limiting for external experimentation, and the math is hairy. If anyone had the gall to do this on a large scale, it'd be Google - they're already treating link text as a de-facto tag on a page, so they're arguably the oldest tagging outfit on the block.

In general, I don't think that tags as a concept are sliding into irrelevance, as Keller seems to suggest. I think the bigger picture is that tags are (currently) one kind of intentional choice that can be expressed digitally. There are other such choices that may aid in search, and I imagine they won't be called "tags". There is also the approaching time when tags stop being reflective of human choices, as automated others-tagged-this-with schemes become prominent, and spammers decide that Del.icio.us Popular is a good place to be.

Dec 1, 2005 2:14am

things fall apart

I've been reading this brilliant essay by Philip K. Dick in small pieces all day: How To Build A Universe That Doesn't Fall Apart Two Days Later. There's a lot of meaty conjecture on the appearance of truth in fiction, and Dick's own run-ins with quasi-religious coincidences arising out of his books Flow My Tears, The Policeman Said and Ubik. It's a lot to digest, so I won't bother interpreting.

Q. What are science fiction writers "good at?"

A. Dick:

I can't claim to be an authority on anything, but I can honestly say that certain matters absolutely fascinate me, and that I write about them all the time. The two basic topics which fascinate me are "What is reality?" and "What constitutes the authentic human being?" Over the twenty-seven years in which I have published novels and stories I have investigated these two interrelated topics over and over again. I consider them important topics. What are we? What is it which surrounds us, that we call the not-me, or the empirical or phenomenal world?

Nov 26, 2005 5:32am

seceding from googlistan

While in Europe, I decided to secede from Google.

I'm starting to get creeped out by the volume of information they're hoarding, influenced by the rhetoric of the AttentionTrust, and doubtful of the longevity of the "Do No Evil" company motto. Someone smarter than I said: "When all the good-guy founders have left the company, Google still has your data."

I thought of a few ways to accomplish this:

- Use robots.txt to disallow Google's search bots. This is the naive approach that relies on search engine trustworthiness.

- Use some variant of khtml2png or ImageMagick to render all text content on my site as GIF text, making it opaque to bots but usable for humans. This is a more difficult approach that will work until the day Google merges their book-scanning project with their search engine.

- Stop blogging.

Ultimately, I decided it was a silly idea. I don't get a ton of search engine traffic (except for "giant ass") and I don't much care. The stuff I write here is public by definition, and HTTP responses aren't an aspect of Google data retention I'm afraid of. What I'm really worried about is the correlation between searches pegged to my browser cookies, mail processed through the GMail account I don't use, instant messages transmitted through the Google Talk account I don't use, and usage information about my website culled from Google Analytics and Google Web Accelerator.

Meanwhile, the proprietor of WebmasterWorld has seceded for real. Black Hat doesn't get it. It sounds to me like Brett is trying a controlled experiment, to see how traffic on the site is affected by dried-up search engine hits. The site in question is a conversation forum, and I can imagine that it would be desirable to exercise some control over the kinds of people walking into your salon. If you want to keep the conversation on the level, you discourage casual participants and bias in favor of dedicated readers and writers. If I were in his position and made this decision, that might be my rationale.

Nov 24, 2005 7:45pm

strange decision from 37S

This is currently old-news, but I'm still playing catch-up after my travels. 37 Signals has disabled comments on their weblog. Can't say I'm their biggest fan, but I always made a point of reading their blog, mostly for the discussion in the comments. I do this with Slashdot, and I'm starting with Digg, too. Sites with vocal and frequent feedback are like the watering holes of the internet, useful more for the other visitors they attract than the inherent local value they provide. Given that 37S had just recently started up their Deck advertising network (...if you want to reach the influencers, buy a card...), I can't understand why they would remove a major traffic driver to their site. Now, there's nothing on the website that's not already in my RSS reader, and the quality of the Signals' own postings have show a marked deterioration lately anyway. I really don't have a reason to swing by anymore, which makes ads purchased in the Deck less valuable. Eric has also noticed that more NYTimes articles are beginning to disappear behind their Times Select "pay wall," another instance of forum owners sabotaging their own relevance.

Nov 22, 2005 3:46am

drang nach oakland

I'm back home in CA.

Last week I had the good luck to attend Design Engaged in Berlin. It was a mind-expansively great time. Thirty-five designers from Europe, Asia and the U.S. converged on Andrew's second micro-conference. I heard so much good stuff about last year's Amsterdam event that I just had to beg or steal my way into this one. The reward was a weekend-long series of talks and directed conversations which covered a much wider range of topics than your typical tech/nerd conference. My own interest was piqued by design for our post-cheap-energy future (Adam read a little Jacobs and Kunstler), exercise machines as a joy (Dance Dance Revolution) instead of a chore (24Hour Fitness), mechanizing thoughtwork, metals which snap into liquid form at low temperatures, and Malcolm McCullough's musings on ambience and uniformity. I don't recall a single official invocation of wiki's, long tails, or anything-onomies (though Thomas was there).

My favorite aspect of the whole affair was the design of the conference itself. Despite an inauspicious beginning with chairs arranged in neat rows, the scheduled 20-minute presentations frequently evolved into conversations once Q and A got started. The conversation-starter sessions were overall the most engaging for me - these were talks on the short side that presented a few themes or arguments (or fun crafts projects) and then yielded the floor to the rest of the group. They had a call and response feel to them that I greatly enjoyed, with just enough structure to keep the thing flowing but not so much that I was ever forced to travel to the laptop-planet for a break. Two participants planned events instead of presenting - Matt designed a button-trading game which won us (Stamen) a Nokia N90, and Mike set up a series of neighborhood walks that bagged us (myself, Gem, Anne, Andrew, Joshua, Liz) a personal tour of Schoenburg from Erik Spiekermann.

I find it interesting that every time I detour to Poland, I fly back with another Norman Davies tome in tow. This time it's Heart Of Europe AND Rising '44, but I've only had time to crack the first. Adam's talk Design For Decline seriously hits home with me, because when I visit Wroclaw (the town, the book) I'm constantly made aware of the fact that 60 years ago the city was a Dante's Inferno of post-war terror. Buildings are riddled with bullet holes. Neighborhoods where family members live were attack vectors of the Red Army. Expelled Germans come back to check out their old homes. For all my good-O'Reillyite optimism about our Google-Wikipedia future, it's only been a few years since the last time Europe convulsed into total war. It's hard to trust in unfettered progress when you're from a country that blinks into and out of existence every few generations.

Nov 6, 2005 2:23pm

polish podcasting

Right now, Polish radio station Tok FM is finishing up an informational broadcast about podcasting and internet radio. It's interesting (to me) that they make an effort to demystify the process - rather than the "for wizards only" take I often hear in American descriptions, they're getting into specific technologies, advantages and drawbacks of various streaming and distribution methods. I think it may be a result of all the English terms ("podcast", "stream") and the need to explain them without relying on existing metaphors.

Neat.

Nov 6, 2005 12:41am

less is crap

What's with the glorified self-balkanization of software?

I'm in Poland, running through my feeds for the first time in a week. Half the stuff I'm reading from Paul Graham, 37 Signals and a million others is undigested "less is more" zen-of-development hoo-hah. It may just be that I'm tired and jetlagged and supposed to be sleeping, but this stuff is majorly rubbing me the wrong way. It's like job #1 for all these guys is gleefully cutting down their workload by proclaiming philosophical opposition to planning, preparation, or listening to their users. I realize this is a great way to get a wee startup out the door, but the approach has no apparent respect for software with more than ten users and developers. Some jobs, like disaster relief or administering a country or designing a car are big by nature. I'll never forget our two visits to BMW's design & engineering center in 2004 and 2003, when I witnessed for the first time a large, highly synchronized operation manned by hyper-competent individuals acting synchronously towards a central goal. It was poetry in motion, a sad counterpoint to the incompetent bumblefuckery of FEMA and other federal agencies botching wars and rescues.

The real problems of the 21st century are worlds-apart from the American business press' sudden obsession with autonomous smallness, agile and open source software. I'm curious to see the focus of small, distributed teams pointed at big problems with inherently big solutions.

Nov 1, 2005 5:59pm

arrrrgh, firefox (redux)

A few weeks ago, I decided to take the Attention Trust Recorder for a spin. The extension only works in Firefox, so I changed over from my beloved Safari for almost two weeks.

I had a few complaints about the switch from the start, some of which haven't haven't faded wih time.

- I'm less flummoxed by Firefox's tab-switching keyboard shortcut. After a few days, I grew accustomed to the control-tab combination, though I still find it somewhat uncomfortable. It's definitely no longer alien to me.

- I stopped noticing the Keychain lack after a few days of being bothered for passwords I couldn't remember. Fortunately, I use the same username/password combo for my unimportant accounts (newpaper logins, etc.) and Keychain provides a way to read my stored passwords. It would be better to keep these all in the system-level store (what do I do next time I switch browsers?), but for now it's fine.

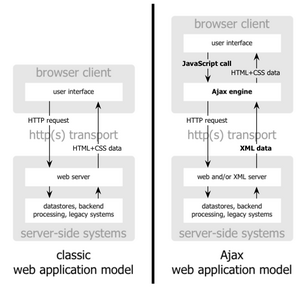

- I sort-of dealt with the stable browsing history issue by adding cache persistence headers to Reblog's output. Unfortunately, this causes the browser to fetch pages from disk instead of network, but makes no difference to the browser scripting engine's lack of stored state. I've seen a number of people write javascript libraries for storing Ajax state - I thought this was a ridiculous idea until I saw that Firefox made it necessary to engage in such repulsive hacks.

- General "macness" is still a problem. Firefox feels like it's been brainlessly ported from Win/Linux land. Camino has more promise in this area, but I doubt it can use the same extensions that Firefox can. Meanwhile, a new annoyance crept up in Firefox focus handling: certain actions caused my browser to lose focus on elements or windows, especially when I did anything with tabs. I have't been able to discern a reproducible pattern, but I've started to develop a sixth sense around the browser quirks. I find myself engaging in technological coping behaviors, which helps the symptom but really shouldn't be necessary in the first place. Boo, Firefox.

Meanwhile, Ed from Attention Trust wrote a short post basically agreeing with my frustrations, so maybe we'll see a Safari version of the recorder. My personal preference would be to skip the browser consideration entirely, and write a local HTTP proxy that's browser-agnostic.

Back to Safari!

Oct 31, 2005 11:52pm

boom-boom-boom

Eric:

We're quietly sitting in the office, and hear a BOOM, which is not that big a deal in the sunny Mission. Then another, and then another, and then another, in rapid succession, followed by sirens and helicopters and then more BOOM BOOM BOOM, and finally we turn down the techno and Mike says to me "um... do you think we should go take a look?" We head to the roof and it's not quite the giant robot stomping on buildings that we had imagined, but pretty close - smoke belching from a building on South Van Ness & 16th (about two blocks away) & periodic BOOM BOOM BOOM accompanied by huge balls of flame.

Oct 31, 2005 1:50am

rassafrassin'

Totally forgot to catch Burtynsky when his photographs were shown at Stanford, and again at 49 Geary.

Oct 28, 2005 4:45pm

mixing metaphors

There's a spirited debate winding down at 37 Signals about Flickr and user-generated content. A lot of the comments seem to be dangerously mixing metaphors and points of view to obscure the non-issue at hand.

Jason: "Now, hundreds of thousands of people are taking photos, uploading them to a site like Flickr, making Flickr better, and Yahoo is reaping the financial rewards, not the photographers. That's a pretty big shift. Yahoo is making money off the backs of the collective camera."

Jason, contradicting himself: "I'm not saying there's anything wrong. I'm just saying the whole user-generated content model is an interesting one one where the public does the work and the company gets the financial reward."

The Marxist revolutionary tone sure makes it sound like something is wrong, no? Content-uploaders of the world, you have nothing to lose but your chains.

Steve, contradicting Jason: "What Flickr (and others) have done is shown that photographs are cheap. They're not paying you because if you left, there'd still be thousands of other people who are willing to send in free photographs."

Benvolio, later: "Jason is hinting at a new business model. something like: users sign up to flickr, flickr makes cash from ads hosted on said users images, flickr gives a cut (between 1-10%) of the cash from these ads to that user. not only is this sharing the love but it provides an incentive to flickr users to create, load and promote great content."

Cripes.

I'm seeing a few problematic metaphors in this thread:

- Money is love.

- Photos are content.

- Flickr is a community.

Jason's position in this debate basically boils down to: "Flickr should pay their users for their participation, so users will have a greater incentive to upload quality photos. Uploading quality photos imroves Flickr, which translates into higher advertising revenue for Flickr and Yahoo." Terms like "better" and "share the love" are applied to ad revenue and micropayments, which strikes me as innappropriate.

I believe that Flickr has been successful because of the simplicity of their idea: provide quality plumbing for sharing photographs, in return for non-intrusive advertising or old-economy money. No new-new-economy language about content, community or peer-production, just sharing photos with your friends. The specificity here is important, and Flickr has always seemed to avoid describing their service as a marketplace, a platform, or a community. Of course it is all those things (and many more), but I suspect that is so because their eye rarely strays from the ball. The fundamental reason people join is to share photograhs, while everything else is a nice bonus. Steve (above) is right - there are thousands of people sending in photographs, but they aren't sending them "to Flickr", they're sharing them with their friends. Photographers who want some scratch for their work sell to Corbis or arrange for licensing on other channels.

The last thing Flickr users need is an incentive to upload - a year's worth of frequent massages proves it. People don't need to be convinced to act socially, all they need is a supportive and malleable environment to act in. Flickr can ignore that at their peril.

Oct 26, 2005 6:33pm

keeping change

There's a connection between BofA's Keep The Change program and the Attention Recorder, but at the moment I can't articulate it.

Oct 26, 2005 4:30pm

reblog 2.0

Reblog's 1.0 announcement promised "push-button republishing for the masses", and we've been doing pretty well following that track for the past year or so. Last night, we pushed the button on Reblog 2.0. The concept behind the software has always been a form of attention cagefight: many feeds go in, one feed comes out. Reblog closes the loop between piqued interest and publishing by making the act of marking a link for publication a one-step affair. We've long thought this was a pretty interesting addition to the standard functionality of an RSS reader, and I'm surprised to see that in late 2005, there's not a lot of other software that performs the same task.

In the meantime, Reblog has started to see slow but steady growth. Eyebeam and Rhizome both use the software to publish net art blogs, and Eyebeam has an explicit "curator" role in the fortnightly reblogger. Global Voices Online has expressed strong interest in adapting the project to their multitiered reblogging operation, and Reblg from Broadband Mechanics borrowed the term for a similar idea based on microformat republishing from within the browser. A lot of these uses place Reblog in the role of plumbing: like the mixer in your shower, it's there behind the wall but you never see it. Reblog's output is typically meant to be fed into a MoveableType or WordPress plugin, where it surfaces as a series of blog posts on an HTML site annoted with "Originally by so-and-so from..." attribution notes.

We've been thinking a bunch about expanding this role a bit, so that the RSS output from Reblog carries more weight. What happens when you chain a bunch of Reblog installations together, so that the output of one person's attention stream becomes fodder for the next? You get an omnidirectional passive e-mail substitute, great for washes of "FYI" type information and general interest sharing. In a lot of use cases there's no reason for the output to ever make it to a regular blog. Small, distributed groups can use the software to share information among themselves, and only escalate to the relatively disruptive e-mail level when action or response is required. Alternatively, chaining feeds together could result in a progressive-filtering mechanism, reducing the complexity of a thousand feeds into a few pertinent pieces of information through a pyramid of editors.

So, with the interest of making Reblog a more flexible sharing tool, we worked on version 2.0 with a few broad goals in mind:

- Usability: Reblog's user interface needed to help the end-user manage large numbers of feeds more effectively. Tagging feeds & entries has proven to be the most flexible way to accomplish this. We were also interested in ways of making the interface look less... dorky. There was a lot of techno-cruft that just had to go.

- Extensible Input via a public API: Greasemonkey is pretty damn cool, so we thought it'd be useful to provide for a way to perform common Reblog editing actions via a programmable API. XML is boring to parse, so Reblog now has a JSON-RPC API exposed.

- Extensible Output via support for plug-ins: We were strongly interested in seeing some sort of plug-in ecosystem evolve around Reblog. If the software's useful, it makes sense to provide for a way to adapt it to specific uses. My own guide for this has been Blosxom, a simple blog engine written in Perl.

One huge change that became apparent as we wrote 2.0 was the need to post your own entries, independent of any particular feed. Historically, Reblog 1.x assumes that the target blog would be used for this purpose. In 2.0 we've added a "post item" feature, which acts as stripped-down Blogger or Del.icio.us analogue, and handles one of the primary uses cases suggested by Reblg. This idea has been gaining some traction recently, most conspicuously in Mark Pilgrim's microformat atom store concept. Mark decribes the idea as "having your own private database", but my own takeaway from Del.icio.us has been the value of making such micro-information public. Reblog is built to share. Bud Gibson has been referring to this idea as the xFolk Veg-o-matic, a slice & dice greasemonkey-based browser augmentation that trawls websites for microformatted links and posts them into Reblog for you.

We believe this is pretty cool.

Oct 25, 2005 3:58pm

social vulnerabilities

The always-interesting SEO black hat writes:

There are so many vulnerabilities in Media 2.0 applications that our heads are spinning trying to decide the best 10 to exploit. The problem? People are not designing systems with the black hat / spammer in mind. Its like setting a democracy and saying vote as often as you like. WTF do you think is going to happen? ... We in the BlackHat community often throttle how abusive we are on certain exploits. Part of the min/max game is knowing how hard you can ride an exploit before you break the underlying application. (Word Inventor Mark Cuban on Splogbombs)

Oct 24, 2005 8:56pm

dumb 2.0

Here we go again:

...a new startup called Go BIG Network wants to one-up LinkedIn by focusing on transactions over a network, rather than the network itself. ... The service helps entrepreneurs broadcast needs across their personal networks for free, and across the whole network for a fee (yet to be implemented). (Linking Startups to VC, Red Herring)

This idea didn't work when it was called Vencast, and it's probably not going to work now. Social networks (the meatspace kind) and recommendations work because they're personal, out-of-band, serendipetous. Formalizing such connections into a "Craigslist for businesses" will hit the wall hard. The kinds of people who have good ideas and the smart people who recognize them keep a step ahead of this sort of factory commoditization of the creative process. Last time around, the appearance of such "marketplace" meta-companies was a sign of the impending crash - what does it mean in 2005, when the declaration of Bubble 2.0 is only a month old?

UPDATE: Vencast was not the first: "By compressing the time frame of the typical VC-entrepreneur dance, VC.COM reduces the lamb-to-slaughter cycle to days rather than months."

Oct 24, 2005 4:18pm

"zak001"

My brother's first published article was just posted at Palantir. If you are interested in painting Haradrim, read on.

Oct 22, 2005 6:42pm

irony defined

I keep finding links to Meet The Life Hackers, a New York Times article about attention and interruption, but I can't seem to scrounge up ten minutes to read the damn thing.

Oct 21, 2005 6:26am

arrrrgh, firefox

I'm taking a week to test Attention Trust's extension with a vanilla (for now) installation of their toolkit. I'm excited - the idea of recording a live stream of all my browsing activities to a location I control is super interesting.

So far, so good - all of my "clicks" are being recorded along with HTTP response code and cookie status (not cookie content). I'm using the default ACME-style display to show my browsing behavior, but it's going to be time to modify it to show some difference between recent activity and overall activity. The extension logs all "normal" website visits. It skips subrequests such as XHR, images or stylesheets, and I had to dig around a little to figure out why I wasn't seeing logs of my secure browsing activity - these are disabled by default.

The extension only works in Firefox, and an Internet Explorer version is planned, with no announced release date. I can't possibly recommend a Safari version strongly enough. I've had to switch to Firefox for the duration of my experiment, and I'm really homesick.

A few things that Safari does well, which Firefox does not:

- Comfortable between-tab navigation keyboard shortcuts. Firefox has chosen a finger-bending combo of ctrl-shift-tab.

- Keychain integration - I'm not interested in Mozilla's password storage ghetto, I want to use the system-wide one that already has all of my passwords stored.

- Stable browsing history - when I use Reblog to read my feeds (all day, every day) I like being able to return to the feeds view in Safari without a required page reload. Firefox hits the server every time, which takes time and loses my keyboard-controlled focal spot on the list. This may just be a matter of hacking out some more informative cache-control headers in Reblog.

- General "Macness" - Firefox looks out-of-place, with its own non-Aqua button designs and interface elements, presumably intended to minimize differences between Mac, Linux and PC implementations. Fuck that.

I'll return to these four gripes as the week progresses - some of them may be simple acclimation issues. For now, I'm continuing to give Firefox a try because the attention recorder is the first strong reason I've had to do so.

Oct 15, 2005 7:01pm

painting videos

Duane Kaiser of A Painging A Day has complete videos showing the making of several paintings available on his site.

I wrote a little about time-lapse views of art production last month.

Oct 14, 2005 7:41pm

radii are beatiful

I find these images both significantly more pleasing than splines:

The diagrams in The Shape of Song display musical form as a sequence of translucent arches. (Martin Wattenberg, The Shape Of Song)

Flight density during one week between international airports. Herdeg, Walter. Graphis Diagrams. 4th Expanded ed. Zurich, Switzerland: Graphics Press Corp., 1981. (Visual Complexity)

Oct 14, 2005 12:14am

ends vs. means

From a comment on The Decembrist (scroll down):

Mature people who actually care about the world don't ponder the question "do the ends justify the means," because they know that there IS no end.

Oct 13, 2005 11:49pm

e-mail 2.0

According to Tim O'Reilly, Web 2.0 is about collaboration, data control, participation: warm and fuzzy up-with-people sentiments. I think E-Mail 2.0 will be very different: automation, delegation and other efficiency-oriented goals.

If GTD is any indication, I bet e-mail is about to see an automation renaissance. I've experimented with mail client customization before, and this week I've set up a toy system to experiment with transposing certain GTD ideas (trusted system, ticklers) into automated e-mail actions. My memory for responsibilities and appointments is notoriously awful, and I've not had the best luck with personal-organization tricks such as the hipster PDA - turns out they require a minimum amount of self-discipline.

I want to be able to completely forget about my responsibilities until a specific time in the future when they become important, so I'm playing with the idea of automated e-mail assistants. I've set up remind@teczno.com as a time-delay mail helper. Any message sent to that address is checked for a date and time on the first line - I use PHP's strtotime() function, and it happily accepts inputs such as "tomorrow 1pm" or "november 1 8:00am" (note that this only works as-expected in the U.S. Pacific time zone). A response is immediately dipatched to the sender, with a promise to send a reminder at the specified time in the future. When that time arrives, the original mail is returned.

Combined with the conversations hack, my mail client is starting to take on the character of a diligent yet stupid personal assistant. I've become more confident in my forgetfulness, now that I know I can remind "future mike" of his responsibilies. The reminders take on the context of their sender, so requests from my mobile phone return to my phone. The script is CC:-aware, so any addresses included in the CC: field are in on the reminder. I can imagine expanding this system to be secretly social - there are other needs suitable for mail that could benefit from delegation. For example, GTD relies on "projects" being decomposed into "next actions", which is significantly difficult. A decompose-bot could accept mails with to-do items in the body, and delegate them anonymously to other system users for decomposition into discrete actions. Users of the system would periodically receive mails asking them to decompose someone else's projects into actions, spreading the difficulty of introspection out to disinterested strangers. It's tit-for-tat meets Bittorrent meets porn-incentives for CAPTCHA-cracking.

Until then, feel free to try out Remind-O-Bot. Remember:

- Date and time on the first line of the message

- Sender gets the reminder

- CC: anyone else you may wish to pester

Oct 12, 2005 2:57pm

microsoft's RSS icon

Microsoft is trying to figure out how to represent RSS with an icon.

Their criteria for success are:

- Convey newness, activity, subscription, continuity

- Build on the orange rectangle

- Avoid text

I'm just surprised that they managed to fit a new economy swoosh into the mix:

Oct 10, 2005 4:25pm

the uncool classes

via Kottke:

From London and Berlin to Sydney and San Francisco, civic authorities agree that the key to urban prosperity is appealing to the "hipster set" of gays, twentysomethings and young creatives. But the only evidence for this idea comes from the dot-com boom of the late 1990sand that time is over.

... the lure of "coolness" leads cities to ignore the fundamental issues - infrastructure, middle-class flight, terrorism - that have so much more to do with their long-term prospects. Cities once boasted of their thriving middle-class neighbourhoods, churches, warehouses, factories and high-rise office towers. Today they set their value by their inventory of jazz clubs, gay bars, art museums, luxury hotels and condos.

(Uncool Cities, by Joel Kotkin)

I'm finally reading Jane Jacobs and this article resonated strongly with me. A few other data points:

- San Francisco mayor Gavin Newsom has warned that recovery from a major earthquake may be more difficult, because most of SF's firefighters live outside city limits, many of them across the still-vulnerable Bay Bridge.

- While walking with Eric near 20th and Guerrero, I was surprised to see a cobbler's shop in a neighborhood otherwise full of boho coffee shops and gourmet groceries. I was subsequently surprised by my surprise, because such businesses ought to be plentiful in a well-populated neighborhood, and the lack made me wonder where the locals actually go to take care of such everyday errands as fixing shoes. The cobbler's storefront wasn't exactly booming.

- Still-devastated holes in the ground on the sites of buildings razed to make room for office space at the tail end of Bubble 1.0. Development in the Bay Area seems to occur at the block level, rather than the building level. I was happy to see an apartment building in Berkeley this weekend that had been injected into an oddly-shaped lot, but the general pattern I see in SF and Oakland is for a developer to buy an entire city block, knock down existing business, and put in a "mixed-use" monster.

Oct 9, 2005 3:24am

Noguchi Filing System

Found today via Vox:

A book I read recently has prompted me to try a rather unconventional filing system, the system proposed and used by Noguchi Yukio, an economist and writer of bestselling books about such things. Implementation of the system requires the user to discard many conventional notions about how to store paper documents. (The Noguchi Filing System)

The basic method is to stick your papers to be filed into letter-size envelopes, and store them vertically in order of filing. Any pair of items on the filing shelf will always be in newest-on-the-left order:

A detail that escaped me at first was that folders removed from the shelf don't go back where they came from, but back to the beginning. I'd like to say that I should give this a try, but I've already been beta-testing a spatially enhanced version for most of my adult life: any mail, papers, or books that I wish to file get put down right where I'm standing. Spatial memory is preserved through location, and temporal cues build up over time in the form of stacks of paper and envelopes and crap. My dad uses a limited spatial version, based on the surface area of the kitchen table. It's augmented with a primitive sort of "wiki", in the form of doodles, notes and sketches on most of the white envelopes.

Kidding aside, I like the temporal memory aspect. It's worth noting that an early decision I made with Vox was to organize by first-appearance from the left. Old stuff hovers on the left, new things fly in from the right. When the project was looking at Google News, this choice was strongly accentuated for the longstanding big names such as Powell, Bush, or Blair.

I also like the no-brain feature. "Filing" is divorced from all categorization costs, simpler even than the free associations of tagging. This could probably work very well for small-to-medium organizational needs, but would grow maddening above a certain point. I can imagine that the system would work optimally with a very small set of predefined categories implemented as separate shelves, though all of this raises the question: how to implement something like this for digital files?

Oct 5, 2005 3:21pm

out of context

Mr. Vander Wal met Mr. Migurski in person that month in San Francisco. "It's difficult to explain to my wife what I'm doing, who these people are or how I've met them," he said.

Oct 2, 2005 6:38pm

what's not web 2.0

37 Signals posts "What isn't Web 2.0?" Secrecy and buzzwords among other things.

you could have skipped the filppant list and just said that web 2.0 is a stake in the ground of developing the semantic web.

...which got some rude "buzzword-bingo" retorts. But I like this idea, so here's part of my response:

It's easy to forget that so-called buzzwords ("collaborative", "transparent") have a history - they *mean* something, even if that meaning tends to be obscured over time by thoughtless use. ...the absence of VC funding, secrets, hiring binges and economic incentives were preconditions responsible for the truly transparent & collaborative development surge that got us where we are now.

Sep 30, 2005 4:44pm

corpse bride

I love reading sentences like this in articles about movies:

For Corpse Bride, data wrangler/computer programmer Martin Pengelly-Phillips wrote a utility in Python computer language to convert the XML data into a flattened reel. The shots get validated for naming and length, and checked against the list of known shots in the editorial database in FileMaker Pro. In Python, a series of AppleScripts are created to update the editorial database with the most recent cut.

Sep 20, 2005 4:02pm

72hours tagged navigation

I'm putting together some emergency kits for when the big earthquake hits and FEMA takes a week to get north of Fremont. I noticed that San Francisco's 72 Hours site has a really interesting tagged navigation concept.

The site plan is broken up along two major axes: task-centered overviews across the top (Make a Plan, Build a Kit), and topic-centered details below. Each task page (shown here) is tagged with the topics it touches, for example Build a Kit highlights the Pets, Food, Water, First Aid and others. This gives a helpful, quick point of entry into the first-level goals that need to be accomplished and uses active verbs to do so.

These quick entry points are augmented by details within each topic page, which provide extra information not covered by the quick-start pages.

The whole sites uses guessable, crawlable URL's, e.g. "pets.html". There's no side navigation bar, and it's beautifully focused on the goal. Even site name serves as a helpful reminder of the amount of time you should be prepared to live on your own, and frames all the advice within.

Sep 14, 2005 3:33pm

bureaucracy = death?

I just sent this to Seth Godin, in response to Bureaucracy = Death:

An interesting counterpoint comes from Bruce Schneier, regarding the security lessons of Katrina: "Redundancy, and to a lesser extent, inefficiency, are good for security. Efficiency is brittle. Redundancy results in less-brittle systems, and provides defense in depth. We need multiple organizations with overlapping capabilities, all helping in their own way..." (Security Lessons of the Response to Hurricane Katrina).

I've been thinking a lot about bureaucratic efficiency in Katrina's wake, and about the different kinds of organizational efficiency. The Grover Norquist / Newt Gingrich calls against government bloat seemed to be well-intentioned, but it's clear from the Department of Homeland Security that the current Admin is looking for top-down efficiency, initiated by fiat and ruthlessly optimized. This seems to have resulted in the kind of brittleness that Bruce talks about, and was predicted by people familiar with distributed networks of any kind.

What would happen if there were a CNO at FEMA? He'd probably be somebody's fuckwit college roommate appointed from the GOP fundraising talent pool, that's what. Brittle.

I've been thinking that a better sort of efficiency is bottom-up, the kind of deep competence practiced by educated, informed people doing their jobs well. You still have a bureaucracy, but with a culture of basic competence that encourages and promotes efficiency at the lowest levels of the ladder. Turns out this is a lot harder to implement, because the solution starts at the K-12 level and moves from there. Progress is measured in decades, not political terms. If there is any lesson from computer science that I wish society could understand, it's this small-pieces-loosely joined design philosophy that makes the Internet work and Unix a stable operating system.

Sep 12, 2005 9:30pm

goodbye, flickr

I decided to delete my Flickr account today. I hadn't uploaded an image in a month or two, and my only exposure to the site was through various RSS feeds. It felt strangely satisfying to hit the big delete button without backing up or otherwise saving photos.

Sep 8, 2005 8:20pm

coast to coast

I'd like to see Maciej's paintings animated through the production process, but instead I will look at the front pages of the San Francisco Chronicle and The New York Times:

Sep 5, 2005 4:28pm

two things before I bail

1. I understand podcasting now

Still haven't successfully listened to a single talkie-podcast, except for one Adam Bosworth talk from IT Conversations where he referred to "this chart" at point and I threw up my hands & turned it off.

But Ryan pointed out DnB Sets, which offers a podcast of drum & bass and breaks sets, most an hour or more in length. This is a really great way to get music! iTunes handles all the details, and every morning I get two or three hours of fresh music to listen to and throw away when I'm done.

2. WTF happened to George Bush?

I'm not a fan of the President, but I thought his general reaction to 2001-09-11 was pretty admirable. Within a day he was front & center acting like he was in control, and imparting a general sense of getting-shit-done. Guiliani helped, too. I understand about photo-ops and PR, but putting on appearances is important.

This week, we have a mealy-mouthed dingbat flying around in his little airplane, listing how many buckets of ice are going to be sent to NOLA and staging absurdist theatrical on-camera "briefings" with the head of FEMA to reiterate the obvious. We know the situation is heart-rendingly fucked - get into that helicopter behind you and go do something about it!

Observe:

"I can see my house from here!"

Aug 31, 2005 6:25pm

two reblogs in the wild

Mike Frumin brought these two sports-focused Reblogs to my attention this morning:

- Sunday Story: NFL news, injury reports, rumors, fantasy football cheat sheets, player rankings and more.

- Hoop Log: Basketball news, with all posts categorized by team and player. Very well-designed, nice orange date slugs.

Both sites are maintained by Kyle Bunch.

Aug 28, 2005 2:16am

freedom and discipline

Sorry for the recent interlude of radio silence, but many interesting developments in Stamenland have conspired to fill my time with meetings, phone calls, conversations and plans instead of the usual aimless hackery.

William Blaze just released an essay on Web 2.0, with a few choice observations on web-enabled API's and other hot topics. Some selected excerpts:

...the web is different now, but the big differences aren't necessarily found in those prosaic "information wants to be free" ideals, which actually stand as one of the biggest constants in web evolution.

...

What really separates the "Web 2.0" from the "web" is the professionalism, the striation between the insiders and the users. When the web first started any motivated individual with an internet connection could join in the building. HTML took an hour or two to learn, and anyone could build. In the Web 2.0 they don't talk about anyone building sites, they talk about anyone publishing content. What's left unsaid is that when doing so they'll probably be using someone else's software.

As a tool-builder, I'm somewhat biased towards the idea that good software leads to good publishing. In the beginning, it didn't really matter how you published content. Webzine '98 lists the minimal entry requirements: a computer with internet access, a text editor, and ftp program, and something to say.

This core is no less valid today than it was seven years ago, when I picked up the essay linked above on a printed flyer at 3am in a National Guard armory in Santa Rosa, CA (don't ask). What's changed is that a lot of people have gotten the message, and suddenly the phenomenal rate of growth in blogs has made every individual voice just a tiny bit quieter. Your options as an opinionated online-writing-guy are to accept that on the Internet, "everyone is famous for fifteen people", or to start paying attention to how you say what you need to say.

Enter RSS, XML, API's. William:

Any user of a public API runs the risk of entering a rather catch-22 position. The more useful the API is, the more dependent the user becomes on the APIs creator. In the case of Ebay sellers or Amazon affiliates this is often a mutually beneficial relationship, but also inherently unbalanced. The API user holds a position somewhat akin to a minor league baseball team or McDonald's franchisee, they are given the tools to run a successful operation, but are always beholden to the decisions of the parent organization. You can make a lot of money in one of those businesses, but you can't change the formula of the "beef" and you always run the risk of having your best prospects snatched away from you.

...

The real hook to the freedoms promised by the Web 2.0 disciples is that it requires nearly religious application of open standards (when of course it doesn't involve using a "public" API). The open standard is the control that enables the relinquishing of control. Data is not meant to circulate freely, its meant to circulate freely via the methods proscribed via an open standard.

In other words, there's a strong relationship between freedom and discipline. Freedom without discipline becomes the freedom to not reach our goals. Discipline is standards literacy. Literacy in the common culture and an ability to navigate it is a pre-requisite for effective communication, a point made by E.D. Hirsch in his work on Cultural Literacy. Hirsch's point about the communicative freedom gained by anticipating the cultural standards of your audience is directly applicable to the read/write web, where publishing your information under a technical format such as RSS and a legal format such as a Creative Commons license means that your work can fly faster, further, and affect a broader audience. This is a higher freedom afforded by self-discipline.

Blaze's paragraph on the risks of public API's can be understood as a criticism of cultural domination. In some cases, this domination is probably not something that can be overcome: Flickr's API grants access solely to data that users have chosen to host on Flickr. EBay's data is specific to the marketplace run by EBay. A more interesting example might come from information that is public domain, such as the Library of Congress' categorization of printed works, or the dense network of facts kept by Wikipedia. I can't speak for the former, but the latter information is made explicitly free under the GNU Free Documentation License. 3rd parties can and do duplicate the Wikipedia database for their own purposes, and Wikipedia ensures that this information is available in a coherent form (SQL database dumps) under a permissive license.

The benefits gained from a higher degree of web 2.0 professionalism are enormous, and they don't invalidate the easy-on promises of 1998.

Aug 16, 2005 5:25am

del.icio.us user flocks

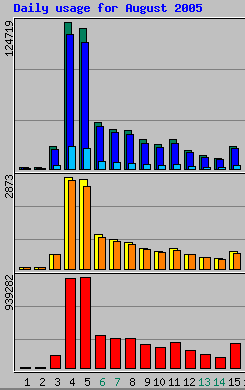

This is kind of neat:

Late last night, Slashdot linked to a Site Pro News article about 10 good CSS resources. Today, the article and its contents (WPDFD, Glish, the offical spec and others) absolutely freaking dominate Del.icio.us Popular, as seen in Vox Delicii, above. (If you're reading this more than a few days after I posted it, hit "Back one week/day" a few times after the jump)

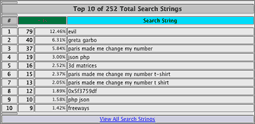

When I first posted Vox, I expected to see users "flock to stories linked from Slashdot or BoingBoing", but not swings as wild as this. Aside from the Slashdot effect, is there something more going on here, like a Del.icio.us Popular feedback effect? I certainly don't think it's a Vox feedback effect; here's the traffic stats since it was launched:

So here are two questions I would like to have answered, after some more data builds up:

- Is there a pattern to the kinds of sites that get the heaviest traffic? If they tend to be list or surveys, it might indicate that Del is being used as a meta-bookmarking service, and that highly-popular bookmarks are themselves pointers to the real content. Here, there seems to be a halo effect, from the Site Pro News to the linked sites, each of which has individually been around for ages.

- Where do the links come from? There are a few URL tastemakers such as Slashdot, BoingBoing, Waxy, or Kottke. but then there are these guys ("Del.icio.us users who bookmark helpful/timely URLs"), none of whose usernames I recognize. Are they top users because they are quick on the draw, or because other users look to them for interesting places?

Aug 14, 2005 12:17am

music industry worried about everything

music industry are big-time weenies.

News for music industry: nobody likes a whiner. Stop complaining and do something interesting so Apple stops eating your lunch.

Aug 11, 2005 4:35am

second-person shooter

Julian Oliver is investigating the second-person shooter format, what it might look and play like. In this take on the 2nd Person Perspective, you control yourself through the eyes of the bot, but you do not control the bot. Your eyes have effectively been switched. Naturally this makes action difficult when you aren't within the bot's field of view; thus both you and the bot (or other player) will need to work together, to destroy each other.

This reminds me of my senior year at UC Berkeley, perfecting my game of multiplayer deathmatch GoldenEye. This was one of the few games I had seen at that time which allowed simultaneous split-screen competitive play, and the secret to getting really good was to stop watching your own screen, and watch the other three. It really did start to seem like a second-person shooter after a while, when tense standoffs were resolved by carefully watching what the other players could see, and reacting accordingly.

The other split-screen game we liked was Mario Kart, where viewing the other screens didn't help quite so much.

Aug 10, 2005 6:20pm

vox delicii attention

Vox (announcement) has gotten a bit of very-appreciated attention since I posted it last week:

- Jim Downing, Smart Mobs

- Information Aesthetics

- About.com Web Search Blog Site of the Day

- Social Software Weblog

- Waxy Links

- Yahtzeen8.0

It's interested to note the difference in reactions between this version and the older In The News site. Is Del.icio.us Popular really a more interesting data set to graph than Google News?

Aug 10, 2005 6:20pm

rediscovering america

Three recent accusations of recognition masquerading as discovery:

- "Funny, but while reading the article my brain made several twitches. It's not so much that I disagree with the sentinence of the article (focusing on activites as compared to, uh, other human aspects, such as colors, shapes and taste, maybe), but I really disagree with the problem specified above; what the hell is the difference between designing for some users compared to all users? They are still both user-centred design!" (Alexander Johannesen on Don Norman)

- "I'm more troubled by the 'Get Real' philosophy. Not that it is in itself wrong, but I can see the horde of inexperienced managers and sloppy programmers who use this kind of rationale as an excuse for poor planning and bad execution." (Cedric, commenting on 9Rules in response to 37signals)

- "First we have to set aside the fact that Clay is now talking about free-text search, and not tagging. But, let's say he is talking about tagging. The system he's discussing already exists. It's called "postcoordinate indexing," and I mentioned it in a prior folksonomy post of mine. I guess that's another thing that's really bugging me. Clay acting as if he's discovered unchartered territory, when, really, it's been well-trod upon for awhile." (Peter Merholz on Clay Shirky)

I think what's happening is that all the accused parties above have absorbed the lessons of All Marketers Are Liars:

Successful marketers don't talk about features or even benefits. Instead, they tell a story. A story we want to believe.

The problem is that there are only so many untold-stories out there. Norman is rehashing the time-worn lessons of attentive, professional design, while making dubious distinctions between "human centered" and "activity centered". 37Signals is repackaging agile software development a.k.a extreme programming, warts and all. Clay Shirky is an academic, and academics are frequently required to take aggressive or contrarian positions to ensure attention, tenure, and publication. If you take the step of distilling your story to a one-sentence elevator pitch, there's a solid chance you'll be overlapping someone else's pitch. This is fine... if you're not also pretending to break new ground by conventiently omitting mention of where the idea may have originated.

Aug 4, 2005 7:13am

acd, hcd, etc

There's an interesting thread going on at SIGIA-L right now. After Don Norman's latest doe-eyed essay ("Human-Centered Design Considered Harmful") was posted, a mostly-illuminating discussion about the role of the designer in unintended consequences ensued. I was initially swayed by Don's essay, but I don't really have the same background in the relevant literature, and I've since come to view Norman's essay as somewhat deceptive.

From the article:

One basic philosophy of HCD is to listen to users, to take their complaints and critiques seriously. Yes, listening to customers is always wise, but acceding to their requests can lead to overly complex designs. Several major software companies, proud of their human-centered philosophy, suffer from this problem. Paradoxically, the best way to satisfy users is sometimes to ignore them.

This quickly started moving in the direct of Intelligent Design, a topic that needs its own Godwin's Law.

How many purposes does a telephone directory serve? I use one to jack up the height of my PC monitor. This is a function of characteristics of the technology (phone book), my desktop environment (desk/chair/monitor heights) and my need for a higher monitor (my physical capacities) and my ability to 'see' the phone book as a solution to my problem.

Ziya:

Again, these may be interesting use cases that are relevant to an anthropologist, as it were. But there's absolutely no reason for paid designers to go out of their way to consider your usage of a phone book as a monitor stand. The fact that you did doesn't inform the design process in a meaningful way.

Designed for driving nails into wood, (the hammer) has been designed with a simplicity that it affords many other uses (driving in wooden plugs, driving in screws, breaking bricks, cutting wire, opening bottles of beer). It's easy to imagine some designer being tasked with "design me a tool for driving in nails" and coming back with some device that does that, but has no affordances for anything else.

Meanwhile, O'Reilly posts topics for this year's Emerging Technologies conference, one of which is "Externalities, Affordances, and Unintended Consequences":

Affordances, usually associated with human-computer interaction, industrial design, and environmental psychology, is here seen as the flipside of externalities: one person's externality is another's affordance.

If I can get a proposal together in time, this should be a hell of a gathering. Antoher interesting topic is "Data as Platform":

How can data visualization use our cognitive preattention to assimilate data quickly, rather than just paging through a database view. Will remixing always be a hack, or are there ways to offer stable commercial services around remixed applications? In other words, what's the path from hack to product for remixing?

Aug 3, 2005 4:57pm

announcing vox delicii

I switched the data source for the News project from Google News to Del.icio.us Popular, and called the resulting piece Vox Delicii.

Here's why:

Since August 2004, Google's algorithm for determining news items worthy of inclusion on the "In The News" short list has become much more opaque, and a little less meaningful. Before that date, I believe it to have been based on objective popularity. George Bush was always in the news, and major news events such as the Richard Clarke / Condi Rice hearings in Spring 2004 were very well-represented. About a year ago, Google switched to an algorithm that appears to be based on the first derivative of popularity: major newsmakers such as George Bush no longer showed up, while lots of "flash in the pan" names did. It was a sensible decision on Google's part, but hell on anyone trying to do a visual analysis. The appearance of the heat map became significantly more chaotic, and it was more difficult to view patterns. In effect, the map above shows change over time, and doing so with information that is already showing change over time gives you a map of acceleration, which is more difficult to comprehend quickly and less interesting to watch.

Similarly, Google's process for determining what constitutes a "proper noun" is also opaque. When Sun and Microsoft settled their long-standing legal disputes, Sun Microsystems appeared here while Microsoft did not. I don't know why - maybe Google is only interested in pairs of capitalized words surrounded by non-capitalized words.

Meanwhile, Del.icio.us Popular is completely transparent. A quick glance at the list of popular items shows them to be organized by number of recent posts. A little digging shows that this number is probably based on the number of posts in the last 24 hours, so right away there's an objective method for understanding the source of the data.

In some ways, the Del.icio.us data is also more interesting for what it represents. The News information was based on news-room memes, and strongly influenced by the Associated Press and the general tendency for news sources to reprint each other's stories. Thus, it wasn't really graphing the mindshare of information deemed interesting by the general public, but by that of professional journalists and their employers and stockholders. Meanwhile, Del.icio.us popularity is a significantly more bottoms-up affair, tracking the oddball tastes of the geek set as it flocks to stories linked from Slashdot or BoingBoing. This is real, honest-to-goodness attention data and it should be fun to watch and analyze as the set grows.

Jul 28, 2005 5:53pm

podcasting

Maciej's Audioblogging Manifesto notwithstanding, this comment on the recent New York Times article "Podcasting Hits Mainstream" is on the money:

Here's a success story for you: I'm now enjoying podcasts so much that I've begun taking the bus to work instead of driving to work, which adds about 45 minutes of communting and listening time into my day. Podcasts reduced my dependence on foreign oil. :-)

(from 37s)

My dad just read Kunstler's Long Emergency, and is all fired up about peak oil. I think there may be an interesting confluence between efficient information-foraging behavior and environmental harmony. Kunstler's main argument seems to boil down to one of human reaction time: given that oil is a finite resource (the only real point of debate is when it will run out), the most important variable is the speed and intent of civilization's rection. If we wait for the market to respond to decreasing supply, we will have waited too long. The book argues that peak oil is a manageable situation if we work to eliminate suburban patterns of living now - move to the city, or become a gentleman farmer.

Meanwhile, the mechanics of attention and connectivity are reaching a point where it's possible to live a rapid transit urban lifestyle, and have more leisure time to read & learn. I do almost all of my reading on public transit, and podcasting only makes sense to me in a context where I'm away from my usual speedreading environment.

Jul 23, 2005 12:47am

painting in public

My good friend Kelly is in the Chronicle today:

"If you don't have a gallery show and you don't have a job, then you do live painting,'' he said as he kneeled on one knee and began to brush the scene with bright oranges and deep blues. "It's a way of getting gigs.'' Porter is one of many young artists who are venturing out from the quiet solitude of their studios to paint before a live audience. While people chat, mingle or dance, artists are turning a blank sheet of paper into art, often inspired by the music of a DJ or a live band.

Jul 22, 2005 5:18am

more notes on look and feel

Last month I posted a small group of application fragments that I think are particularly good designs to emulate for an upcoming revision of Reblog, an RSS aggregator application that I co-develop with Mike Frumin at Eyebeam.

I just finished the first draft of a redesign based on those notes. Feedback would be very much appreciated.

Jul 22, 2005 5:18am

@#$% moveon

Why am I getting mail from MoveOn yelping about this week's milquetoast Supreme Court nominee, while this is happening?

Jul 21, 2005 6:16pm

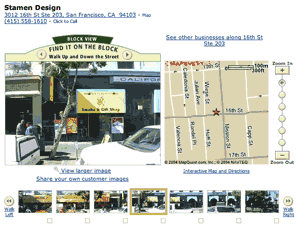

mappr in businessweek

Businessweek Online has an article by Rob Hof that details several web services mashups, including our project Mappr. The "slideshow" illustration is a Mappr screenshot, and we're #4 in the list.

I know this because I've been getting CPU load warning messages on my phone all morning, and our traffic has increased by something like 1000%.

Also featured are ChicagoCrime, HousingMaps, and Alan Taylor's Amazon Light. A9 is an interesting inclusion, because unlike the rest of these "unilateral collaborations", it's an official project from Amazon.

Jul 19, 2005 10:52pm

america, fuckyeah

This morning I took the Naturalization Oath of Allegiance and became a citizen of the United States, finally.

Jul 15, 2005 5:50pm

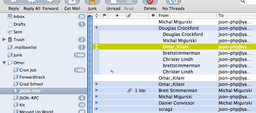

emulating gmail conversations in mail.app

I use Apple's Mail.app as an IMAP client. It means that I can also check my mail via Pine or Squirrelmail, on any of the machines I use regularly. This is good.

Gmail has an awesome design feature called conversations, where your inbox and outbox are merged into a single message stream, and you can see a complete back-and-forth exchange in one place, instead of having to rely on sane quoting practices or constantly switch between mailboxes. I don't use Gmail for a number of reasons, mostly inertia and control-freakishness. I do want the benefit of conversations, though.

One method I've thought of using to duplicate this feature is to generate procmail rules to automatically route all of my non-list inbox mail to a "conversations" mailbox, and then use that as my outbox as well. This could work, but it feels like a serious hack that could have negative consequences down the line. Since I use Mail.app as my primary mail client, I think that OS X Tiger's new "smart mailbox" feature offers a nice compromise, where I get to have a conversation view in just one of my mail-reading environments.

So, by setting up a smart mailbox with just two rules, I've got conversations and I don't need to switch to Gmail to get them. The rules are simply to include all mail that's either in my inbox or my sent folder, nothing more. This handles 90% of the cases where I might want to interleave messages, with very few drawbacks.

There are two big problems with this approach:

- It only works in Mail.app. I can deal with this, since the conversation view is not yet a crucial feature of my reading.

- Mail.app's search feature doesn't seem to work within smart mailboxes. This is probably a bug on Apple's part, or at least a lame design decision for the sake of efficiency. Maybe they'll fix it in a future release.

Jul 12, 2005 4:33pm

panoramic photos from new york

I just returned from my five-day trip to New York on Sunday. I was in a panoramic photo mood, so here are four composite images. The first two are from Eyebeam's Chelsea space, and the last two are from my friend's warehouse space in Williamsburg where I stayed.

Jul 9, 2005 12:20am

we spoke at flashforward

Darren and I presented an hour-long Ask The Experts session at at Flash Forward in New York this morning.

Slides from our talk are available for download. There are also BSD-licensed demo files that demonstrate Flash implementations of various map projections and some helpful linear algebra.

Jul 8, 2005 5:32am

media futures

Five articles I need to read very soon, in Seth's Transparent Bundles:

- Media Futures: AUTOMATA: "The most exciting new Internet companies are focused on lead generation, behavioral targeting, co-registration paths (aka coreg) and domain name brokerage. I seem to stumble every day across some new firm propping itself up on the shoulders of Google, Yahoo! or others to take advantage of a current wrinkle in an otherwise perfectly efficient landscape."

- Media Futures: ALGORITHM: "An Algorithm is a set of instructions or procedures for solving a problem. In the same way that computer scientists 50 years ago focused on the single problem of designing a general purpose computer, there is a similar focus in 2005 among leading Internet service architects: creating a social media computer that leverages user generated content to automate the production of commercial content."

- Media Futures: API: "...But now, in tackling the concept of API, even as it relates to something familiar like Internet Advertising, I am intimated by the history of professional, enterprise computing...."

- Media Futures: ALCHEMY: "In considering Alchemy as it relates to the Internet, I have been spending my time trying to reconstruct my college readings of Walter Benjamin, the beautifully melancholy German essayist from the 30's."

- Media Futures: ARBITRAGE (II, III, IV, V): "Arbitrage is the fifth and final part of the Media Futures series. It has taken me a full month to establish enough contexts for the word so as not to reduce its meaning."

Jul 5, 2005 1:17am

new york again

Darren and I are heading to New York tomorrow, where we will host our "Putting Data on the Map" Friday morning Ask-the-Experts session at this years' Flash Forward conference. Anything interesting going on I should know about?

Jul 4, 2005 11:28pm

we spoke at where 2.0

Eric and I presented our MoveOn Virtual Town Hall project at O'Reilly's Where 2.0 conference last week.

Our talk went well, the whole conference was great fun. Slides from our talk are available for download. Elizabeth Goodman posted notes on the conference, including our 15-minutes segment - ours is near the bottom of that page.

Jun 29, 2005 7:30am

notes on look and feel

I've been planning a visual overhaul of Reblog's stylesheets. They are starting to look puffy and dated, and its time to cut out a little fat. I've collected a small group of application fragments that I think are particularly good designs to emulate.

Jun 28, 2005 2:18am

we are speaking at where 2.0

Eric and I are presenting our MoveOn Virtual Town Hall project at O'Reilly's Where 2.0 conference this week.

Stamen collaborated with MoveOn.org to create a live, map-based application which allows thousands of people to simultaneously communicate with MoveOn staff, featured speakers and one another. The project encourages a visceral sense of "being there" among participants, provides an immediate visual picture of the spread of MoveOn membership, and allows that community to connect with itself in a powerful new way. Upwards of 50,000 members have participated in these online town hall meetings simultaneously. Migurski and Rodenbeck will describe the nature of collaborative engagement that live online maps can provide.

Jun 26, 2005 11:16pm

razorbern, amazing photographer

Razorbern's photos on Flickr have been among my absolute favorites over the past few weeks. Here are a few that I expecially like:

Jun 25, 2005 9:46pm

GSV released

I've taken my previously-mentioned giant-ass image viewer, renamed it to "GSV", and cleaned it up a bit for easier distribution under a BSD license.

Features:

- Allows viewing of extremely large images on the web, by segmenting them into smaller tiles and showing only what will fit on the screen.

- Complete visual control through vanilla CSS.

- No server-side technology required.

- Developed in Mozilla and Safari, tested in Internet Explorer, reportedly works in Opera.

- Thanks to behaviour.js, very little actual Javascript knowledge required.

- Includes Python script for segmenting images.

Jun 24, 2005 6:15am

reblg, again

Marc Canter posted a lengthy reply to my previous post about reblg. It's a great point-by-point answer, and has been clarified somewhat through conversation as well. He really has a grand vision of a microcontent mesh, and it's both aspirational and achievable, so I'm into it. There are still a few difficulties I'm having with it, though, mostly of the "metacrap" variety.

Overall I'm really into the possibilities here, because I think that microformats have great potential to pick up some of the sem-web slack in a way that's incrementally reachable.

Marc lists a few benefits for content creators in addition to the ones I mentioned. One is:

It's imperative that the structure of the microformat or micro-content be maintained. This is the 'challange' that RSS has put us into - services like UpComing.org enable subscription to one's personal events, but the structure of the event is lost. That's gotta stop. We NEED to maintain the structure of stuctured content!

Okay, yes. But right now I subscribe to an iCal version of my Upcoming.org events, and they appear in my calendar app along with all my other events and appointments. That's not just structure, it's structure in a format I can use directly. hCal isn't there just yet, and it's the easiest one of the microformats to render unambiguously. Imagine hReview butting up against straw man #7.

Something else he says, later:

Going back to the islands of functionality..... For us (as an industry) to equal Longhorn and whatever Apple comes up with - it's imperative that we come up with techniques to implement the mesh. A mesh that inter-connects tools togfether. That's the infrastructure we're proposing.

I'm curious why Google isn't on the list with Apple and Microsoft. I view them as being in the best possible position to provide the kinds of aggregation services that microformats need, and the least likely to cooperate openly. Just as tags are useless without a Del.icio.us or Flickr to provide a comparative context, semantic microcontent requires that like be compared with like for it to make any sense. A decent search engine that was microcontent-aware would certainly help, and this seems to be exactly what Marc's proposing.

I bet it would take Google a month tops to implement the expected response for a search like "format:hcal san francisco skinny puppy shows".

Marc pledges not to commericialize the data, which is a bit of a bummer to me. Having a plan for commercialization up-front means that you're prepared for the eventuality (because it always comes up), and you're talking about it from day one so nobody from the information-wants-to-be-free crowd freaks out down the line.

Anyway, it's a lot to digest. Especially as it relates to ReBlog, because there's so much overlap it's scary. He's right that we're converging on the same spot from different direction - ReBlg has the big picture with not a lot of proof-of-concept, while ReBlog has been working pretty well for a year now in the specific domain of RSS content curation. It's a few plug-ins and bookmarklets away from realizing half this stuff.

Jun 23, 2005 7:10pm

cameron's blog survey

I took Cameron Marlow's weblog survey so he can finish his PhD, and you should too.

Jun 22, 2005 8:49pm

continuous partial attention

These are excerpts from Nat's notes on Linda Stone's Supernova 2005 talk on Attention.

I see twenty year cycles. Coming through in the cycles is a tension between collective and individual, and our tendency to take set of beliefs to extreme then it fails us and we seek the opposite.

...

1985-2005: Network center of gravity. Trust network intelligence. Scan for opportunity. Continuous partial attention is a post-multitasking adaptive behaviour. Being connected makes us feel alive. ADD is a dysfunctional variant of continuous partial attention. Continuous partial attention isn't motivated by productivity, it's motivated by being connected. MySpace, Friendster, where quantity of connections desirable may make us feel connected, but lack of meaning underscores how promiscuous and how empty this way of life made us feel. Dan Gould: "I quit every social network I was on so I could have dinner with people."

...