tecznotes

Michal Migurski's notebook, listening post, and soapbox. Subscribe to this blog.

Check out the rest of my site as well.

Feb 2, 2010 6:18am

the hose drawer

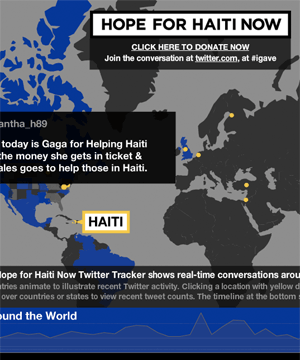

Last week, Stamen was involved in the MTV Hope For Haiti Now telethon. It was apparently the largest such fundraising effort in history, pulling down over $60 million in what the New York Times called "a study in carefully muted star power".

So it was, you know, a pretty intense event to be a part of. With about a week or so of lead time, we put together an interactive map showing moderated Twitter messages connected to the live event and relief for Haiti generally. It was also part of the post-show on television.

It looks like this:

Sha and I traveled to Los Angeles to work out last-minute kinks and watch over the project. Aaron was up here, carefully seeing to the smooth operation of the engine driving the Twitter collection process for the duration. Much of the office pulled together to crank out this project on pretty much no notice, and it was an inspiring and energetic effort.

I want to talk about that engine, though - it's occupied most of my headspace since we all got back from the holidays, headspace ordinarily full of geography and cartography. After dabbling in the consumption and development of real-time APIs for a few years, we've started working with the high-volume Twitter stream this year. Back in 2007, Kellan Elliott-McCrea and Blaine Cook opened our eyes to the possibilities of streaming APIs with their series of Social Software For Robots talks. We poked at XMPP and other persistent technologies a few times, but what really whetted the appetite was a project we did with MTV for the 2009 Video Music Awards, our first large-scale public project connected to the Twitter streaming API. The VMA project was a collaboration with social media monitoring company Radian6, who handled the backend bits. Given Stamen's development and design focus, it was only going to be a matter of time before we started pawing at that backend technology ourselves.

The consumption and moderation system we have developed was christened "Hose Drawer" by Aaron, a name that neatly encapsulates two strands in our recent interests:

- "Hose" draws on Blaine's joking references to "drinking from a fire hose" about live streams of Twitter's real-time database, a phrase that now graces the official name of the service itself. The complete feed is called the fire hose, while a limited feed for casual users is the garden hose. I've heard that the theme persists internally in names such as "hose bird cluster", a reference to Twitter's avian mascot.

- "Drawer" is a nod to last year's Tile Drawer, an EC2 virtual machine image for rendering OpenStreetMap cartography. We're thinking about a future where this kind of functionality is as much a piece of furniture as a PC, albeit one that can be created out of thin air and destroyed just as quickly. With a growing selection of infrastructure products, Amazon's Web Services are making it possible for us to develop services that act like products, materialized by small Python scripts that bootstrap themselves into multi-machine clusters.

This is the naming convention you get from living the pun-driven life*.

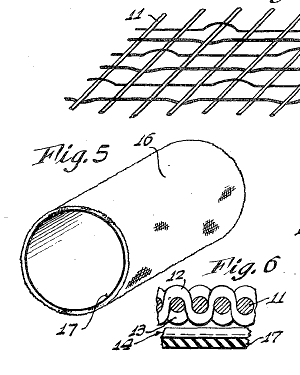

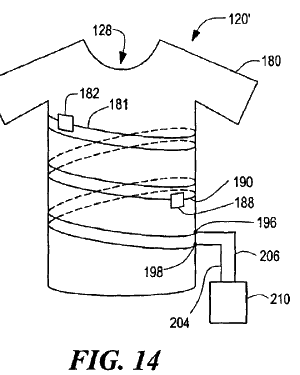

The firehose offers a continuous flow of data, yet requires us to break that flow down into discrete chunks. I've been thinking a bit about this process in our work since Tiles Then, Flows Now, my 2008 Design Engaged talk about map tiles and continuity, so much so that I see the process of breaking and reforming continuity everywhere around me. I flew several times last month, and each time I passed through security I thought about the check-in process as an elaborate map/reduce implementation, atomizing a stream of passengers into packets of shoes, laptops, jackets, bags and bodies. Numerous fascinating patents cover the splitting up of a steady flow, from t-shirts cut from unending tubular knit fabrics, to continuously-cast steel, to simulated egg yolks sliced from unbroken cylinders.
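To make the chunking idea concrete, here is a minimal sketch in Python of cutting an endless stream into discrete batches. It is not Hose Drawer's actual code: the `chunked` helper and the fixed batch size are assumptions for illustration, and a real consumer would more likely cut on time windows or byte counts.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def chunked(stream: Iterable[T], size: int) -> Iterator[List[T]]:
    """Yield successive lists of up to `size` items from `stream`.

    Hypothetical helper: the stream may be endless, so batches are
    produced lazily, one at a time, as the caller asks for them.
    """
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(stream)
    while True:
        # islice pulls at most `size` items without consuming any more.
        batch = list(islice(iterator, size))
        if not batch:
            # The stream ended; a live feed would simply never get here.
            return
        yield batch
```

Because the generator only reads what it needs for the next batch, the same function works on a finite list of test messages and on a feed that never ends.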

Scott Bilas, whose Continuous World Of Dungeon Siege paper I drew on heavily for DE 2008, describes the challenges of writing a streaming system in the world of video game design:

The core problem that we had so much trouble with is that, with our smoothly continuous world, there are no fixed places in the world to periodically destroy everything in memory and start fresh. There are no standard entry/exit points from which to hang scripted events to initialize or shut down various logical systems for plot advancement, flag checking, etc. There is no single load screen to fill memory with all the objects required for the entire map, or save off the previous map's state for reload on a later visit to that area. In short, not only is the world continuous, but the logic must be continuous as well!

The pattern we see here is to keep crises small and frequent, as Ed Catmull of Pixar says in an excellent recent talk. When describing the difficulty Pixar's artists had with reviews ("it's not ready for you to look at"), he realized that the only way to break through resistance to reviews was to increase the frequency until no one could reasonably expect to be finished in time for theirs. The point was to gauge work in motion, not work at rest. "So often that you won't even notice it," said Elwood Blues.

I'm interested to see where we can take this product-that-isn't-a-product. We're going to be using it for an upcoming high-profile sports broadcasting client (you'll hear more about this in another two weeks), and the stress tests administered by the Haiti telethon have shown exactly where we need to do more work. Oddly, the overall performance was great, but we found ourselves occasionally needing to go tweet diving through the database, looking for specific messages that were good for television. This ability to reach in and meddle with the guts, place yourself on a calm island in the middle of the stream, rewind the tape and alter the flow, is the next type of control we're experimenting with.
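A rough sketch of what "rewinding the tape" could look like, again in Python. Everything here is hypothetical: the `RewindBuffer` class is an in-memory stand-in for the database we actually went diving through, and its method names are invented for illustration.

```python
from collections import deque
from typing import Callable, Deque, List


class RewindBuffer:
    """Keep the most recent messages so a moderator can look back.

    Hypothetical illustration only: a bounded in-memory buffer, not
    the database-backed system described in this post.
    """

    def __init__(self, capacity: int) -> None:
        # A deque with maxlen silently drops the oldest item when full.
        self._messages: Deque[str] = deque(maxlen=capacity)

    def append(self, message: str) -> None:
        """Record one message as it arrives from the stream."""
        self._messages.append(message)

    def search(self, predicate: Callable[[str], bool]) -> List[str]:
        """Return retained messages matching `predicate`, oldest first."""
        return [m for m in self._messages if predicate(m)]

    def rewind(self, count: int) -> List[str]:
        """Return up to the last `count` messages, oldest first."""
        if count <= 0:
            return []
        return list(self._messages)[-count:]
```

The stream keeps flowing into `append` while `search` and `rewind` read from the calm island of whatever is still retained.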
