tecznotes

Michal Migurski's notebook, listening post, and soapbox. Subscribe to this blog.

Check out the rest of my site as well.

Mar 16, 2013 3:24am

documentation for tiled vectors in mapnik

I’ve documented the tiled MVT layers on offer (currently two), possibly underexplained yesterday.

Mar 15, 2013 6:09am

the liberty of postgreslessness: tiled vectors in mapnik

(tl;dr: VecTiles)

Data is one of OpenStreetMap’s biggest pain points. The latest planet file is 27GB, and getting OSM into the Postgres database can be a long and winding road.

Vector tiles offer a way forward, and Mapnik is growing features to support them. Matthew Kenny writes about them on his blog from a Polymaps-like in-browser rendering angle, but I’m also thinking about how vectors could be used with Mapnik directly to render bitmaps without needing direct access to a spatial database.

Three parts are needed for this to work:

- A server with data that can render vector tiles.

- A datasource for Mapnik to request and assemble tiles.

- A file format to tie them together.

MVT (Mapnik Vector Tiles) is my first attempt at a sensible file format. In Polymaps and my WebGL mapping experiments I’ve been using GeoJSON, but it has a few disadvantages for use in Mapnik.

One problem that GeoJSON doesn’t suffer from is size: when simplified at the database level, truncated to six digits of floating point precision at the JSON encoding level, and gzipped at the HTTP/application level, GeoJSON shrinks surprisingly well. At zoom level 14, the data tiles for San Francisco’s artisanal hipster district take up almost 700KB, but gzip down to just 45KB. At zoom level 16, those same data tiles require 1.16MB but gzip down to 76KB. That’s a crazy 94% drop in size on the wire, not out of line with what I’ve been seeing in practice.
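The truncate-then-gzip step can be sketched in a few lines of standard-library Python. This is only an illustration of the idea, not the code TileStache runs; `round_coords` and `encode_tile` are made-up names, and the input is assumed to be a plain list of GeoJSON feature dictionaries.

```python
import gzip
import json


def round_coords(coords, digits=6):
    """Recursively round every number in a GeoJSON coordinate array."""
    if isinstance(coords, (int, float)):
        return round(coords, digits)
    return [round_coords(c, digits) for c in coords]


def encode_tile(features, digits=6):
    """Return (raw_bytes, gzipped_bytes) for a GeoJSON FeatureCollection.

    `features` is assumed to be a list of GeoJSON Feature dicts.
    """
    trimmed = []
    for feature in features:
        # Copy the geometry so the caller's data is left untouched.
        geometry = dict(feature["geometry"])
        geometry["coordinates"] = round_coords(geometry["coordinates"], digits)
        trimmed.append({
            "type": "Feature",
            "geometry": geometry,
            "properties": feature.get("properties", {}),
        })
    collection = {"type": "FeatureCollection", "features": trimmed}
    # Compact separators avoid spending bytes on whitespace.
    raw = json.dumps(collection, separators=(",", ":")).encode("utf-8")
    return raw, gzip.compress(raw)
```

Comparing `len(raw)` against `len(gzipped)` for a real tile is a quick way to check how much of the savings comes from gzip alone.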

GeoJSON’s big disadvantage is the reëncoding work necessary at the client and server ends. Shapely offers quick convenience functions for converting to and from GeoJSON, but you’re still spending cycles reprojecting between geographic and mercator coordinates, and parsing large slugs of GeoJSON is needlessly slow.
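For a sense of the per-coordinate work involved, here is a minimal sketch of the standard spherical mercator formulas in plain Python. The function names are made up for illustration; a real pipeline would more likely lean on a projection library.

```python
import math

# Sphere radius in meters used by spherical mercator (EPSG:3857).
EARTH_RADIUS = 6378137.0


def lonlat_to_mercator(lon, lat):
    """Project geographic degrees to spherical mercator meters."""
    x = EARTH_RADIUS * math.radians(lon)
    y = EARTH_RADIUS * math.log(math.tan(math.pi / 4 + math.radians(lat) / 2))
    return x, y


def mercator_to_lonlat(x, y):
    """Unproject spherical mercator meters back to geographic degrees."""
    lon = math.degrees(x / EARTH_RADIUS)
    lat = math.degrees(2 * math.atan(math.exp(y / EARTH_RADIUS)) - math.pi / 2)
    return lon, lat
```

Each vertex costs a handful of trigonometric calls in each direction, which adds up quickly on tiles full of street geometry.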

Since Mapnik 2.1, there has been a Python Datasource plugin that allows you to write data sources in the Python programming language, and it wants its data as pairs of WKB and simple dictionaries of feature properties. MVT keeps its data in binary format from end-to-end and never leaves the spherical mercator projection, so most of the data-shuffling overhead is skipped entirely. MVT uses zlib internally to compress data, and I reduce the floating point precision of all geometries using approximate_wkb to make them more shrinkable.
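To show why lower precision makes binary geometry more shrinkable, here is a rough sketch that zeroes the low mantissa bits of each 64-bit float before zlib compression. It is not the actual `approximate_wkb` implementation and it does not reproduce the MVT byte layout; `approximate_double`, `pack_coords`, `compressed_size` and the `keep_bits=26` default are all assumptions made for the example.

```python
import struct
import zlib


def approximate_double(value, keep_bits=26):
    """Zero the low mantissa bits of a float64, keeping `keep_bits` of 52."""
    (bits,) = struct.unpack("<Q", struct.pack("<d", value))
    mask = ~((1 << (52 - keep_bits)) - 1) & 0xFFFFFFFFFFFFFFFF
    return struct.unpack("<d", struct.pack("<Q", bits & mask))[0]


def pack_coords(coords):
    """Pack (x, y) pairs as little-endian doubles, similar to WKB coordinates."""
    return b"".join(struct.pack("<dd", x, y) for x, y in coords)


def compressed_size(coords):
    """Return the zlib-compressed size in bytes of the packed coordinates."""
    return len(zlib.compress(pack_coords(coords)))
```

With 26 bits kept, the relative error stays under 2^-26, which for mercator coordinates in the tens of millions of meters works out to a fraction of a meter. The runs of zero bytes this creates are exactly what zlib is good at squeezing.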

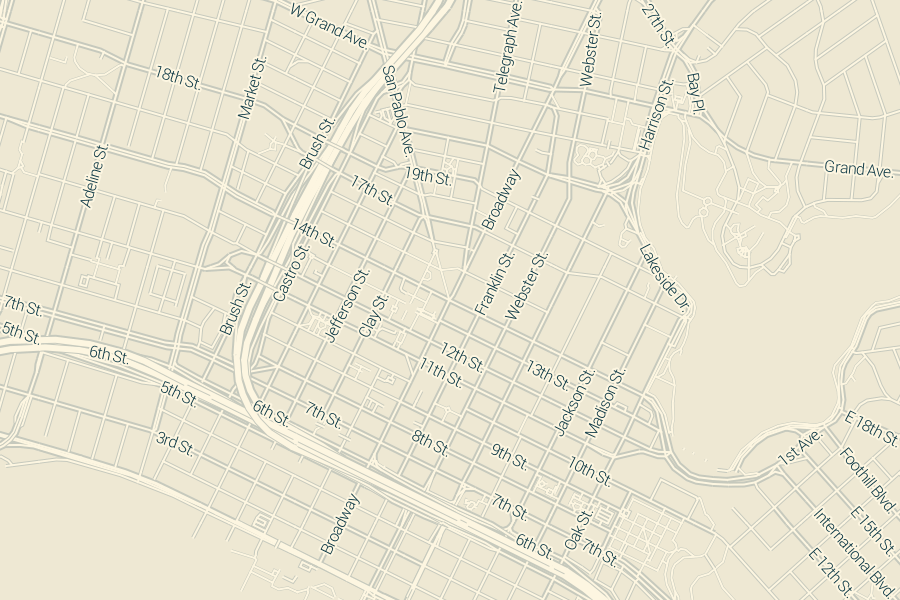

The performance I’ve seen has been decent, all things considered. This map of downtown Oakland rendered in 1.5 seconds, and two-thirds of that time was spent waiting on network latency:

On the server side, I’m using the new TileStache VecTiles.Provider to generate tiles. It makes both GeoJSON and MVT.

It’s been especially interesting thinking through “where” cartography lives in a setup like this. I spoke with Dennis McClendon when I visited Chicago recently, and he pointed out how there’s now a divide between data and rendering in digital cartography that doesn’t exist for him. Traditionally, making a map meant collecting and curating data. Only with the distributed labor force of the OpenStreetMap community and the effort of projects like Mapnik can you frame cartography separately from data. Vector tiles blur this distinction somewhat, because the increased distance and narrower bandwidth between renderer and data source forces some visual decisions to be made at the data level. Tiles for drawing lines vs. labels, for example, are separate: lines can be clipped at tile edges and flags for bridges, tunnels and physical layering must be preserved, while labels demand unclipped geometries and simpler lines.

A portion of the live TileStache configuration looks like this, with linked Postgres queries.

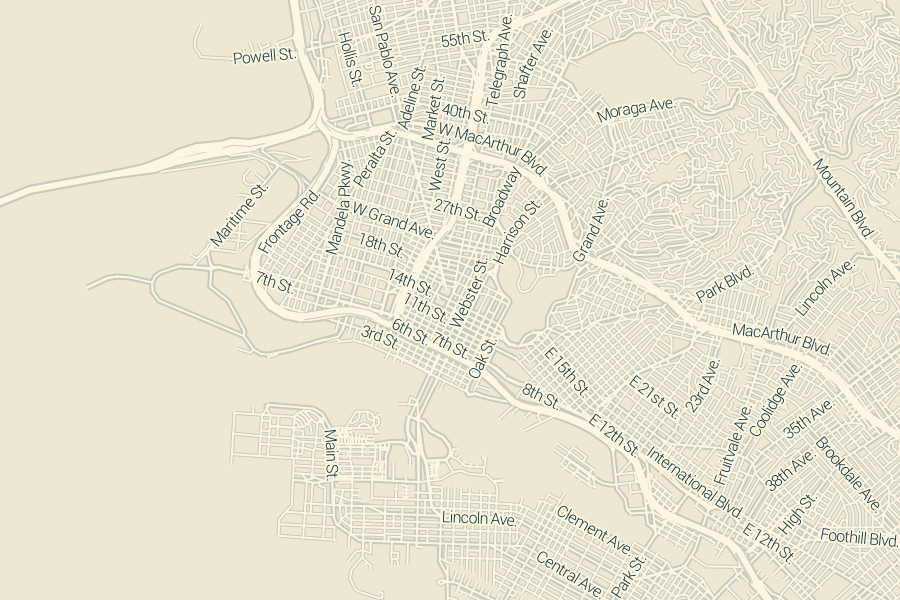

The division of linework and labels into separate layers yields this rendering at zoom 15, completed in 2.7 seconds with about 1.1 seconds of network overhead:

Back on the client side, the TileStache VecTiles.Datasource provides data retrieval, sorting and rendering capability to Mapnik. I’ve tested it with Mapnik XML stylesheets, Carto on the command line, and Cascadenik, and it’s worked flawlessly everywhere. I’ve not tried it with TileMill. The project MML file contains simple URL templates in the datasource configuration, while the style MSS is essentially unchanged from a typical Mapnik project.
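As a rough idea of what a datasource has to do with a URL template, here is a sketch that turns a geographic bounding box into the list of tile URLs covering it, using the usual web map tile arithmetic. The `{z}/{x}/{y}` placeholder style, the `example.com` host and both function names are assumptions for illustration, not the VecTiles.Datasource internals.

```python
import math


def tile_for_lonlat(lon, lat, zoom):
    """Return the (column, row) of the tile containing a point at a zoom level."""
    n = 2 ** zoom
    x = int((lon + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(math.radians(lat))) / math.pi) / 2.0 * n)
    return x, y


def tile_urls(template, west, south, east, north, zoom):
    """Expand a URL template for every tile touching the bounding box."""
    # Rows count downward from the north, so the north edge gives the minimum row.
    min_x, min_y = tile_for_lonlat(west, north, zoom)
    max_x, max_y = tile_for_lonlat(east, south, zoom)
    return [
        template.format(z=zoom, x=x, y=y)
        for y in range(min_y, max_y + 1)
        for x in range(min_x, max_x + 1)
    ]


# Hypothetical template; substitute whatever the datasource is configured with.
urls = tile_urls("http://example.com/{z}/{x}/{y}.mvt", -1.0, -1.0, 1.0, 1.0, 1)
```

Each URL becomes one HTTP request, which is where the network overhead in the timings comes from.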

The Mapnik Python Datasource API expects data in the projection of the final rendering, delivered as WKB geometry and simple dictionaries with strings for keys (not unicode objects, a Mapnik gotcha). Inside the zlib-compressed payload of an MVT tile, this data is provided almost without change. Unclipped geometries can show up in more than one tile, so duplicates are dropped by comparing the whole of the WKB representation byte for byte, and there’s not a point anywhere in the client process where the WKB is decoded or parsed until it hits Mapnik.
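Deduplicating on the raw WKB bytes needs nothing more than a set. The sketch below assumes each tile has already been unpacked into a list of `(wkb_bytes, properties)` pairs; `unique_features` is a made-up name, not a function from TileStache.

```python
def unique_features(tiles):
    """Merge features from several tiles, dropping repeated geometries.

    `tiles` is an iterable of lists of (wkb_bytes, properties) pairs.
    Geometries are compared as opaque byte strings and never parsed.
    """
    seen = set()
    unique = []
    for features in tiles:
        for wkb, properties in features:
            if wkb in seen:
                continue  # same geometry already arrived in another tile
            seen.add(wkb)
            unique.append((wkb, properties))
    return unique
```

Because the bytes are only hashed and compared, this works for any geometry type without needing a WKB parser on the client.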

This zoom 13 rendering still demands 1.1 seconds of network overhead, plus 2.7 seconds of actual CPU work to render all the small streets:

I suspect that the network overhead would drop substantially if I allowed the client Datasource to request much larger tiles, either 512×512 or 1024×1024 at a time instead of the traditional 256×256. This is quite easy to do in code but the expectations around line simplification and correct behavior at each zoom level are challenging. I’m struggling to find the balance here between my own fluency in tile coordinates and something trivially usable by cartographers and TileMill users hoping to skip the database grind.
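The effect of tile size on request count is simple arithmetic. This sketch assumes the rendered image lines up with the tile grid, which is the best case; an unaligned image can need one extra row and column. `requests_needed` is a made-up helper.

```python
import math


def requests_needed(width, height, tile_size=256):
    """Count the tiles needed to cover a grid-aligned image of the given pixel size."""
    return math.ceil(width / tile_size) * math.ceil(height / tile_size)


# A 1024x768 rendering at the three tile sizes mentioned above.
counts = {size: requests_needed(1024, 768, size) for size in (256, 512, 1024)}
```

For that 1024×768 image the counts come out to 12, 4 and 1 requests respectively, so fewer round trips are traded for larger individual payloads.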

Doing this all over HTTP has huge advantages, despite the network overhead. For example, the tiles used in the examples above are all requested from tile.openstreetmap.us, which happens to make use of Fastly’s caching CDN to ease traffic load on the OSM-US server. Running your own caching proxy to bring it all closer seems more trivial than learning how to set up Postgres.

Overall, I think VecTiles and MVT offer a compelling way to use Mapnik or TileMill with no local datastore. The test tiles I’m working with are served from OSM-US Foundation supported hardware, where Ian Dees and others have ensured an up-to-date database. It should be possible to take advantage of OpenStreetMap for cartography without learning to be a database hero.

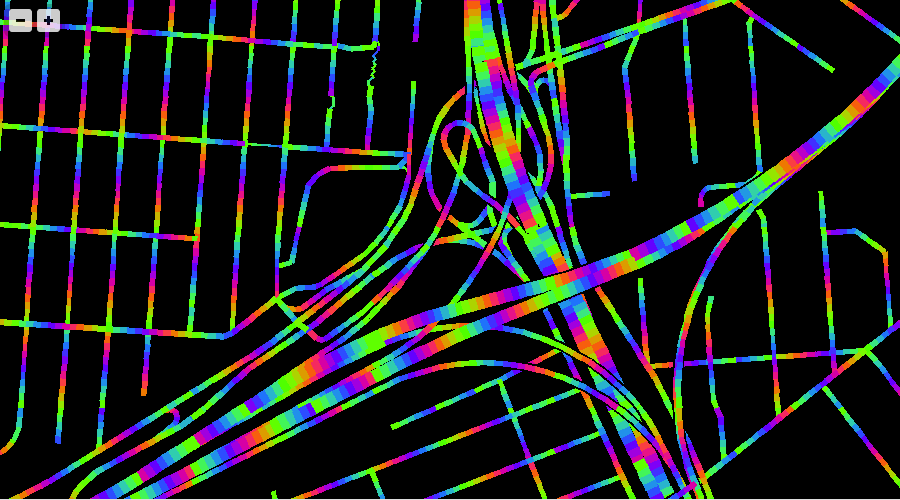

Since you’ve read this far, here’s the Mario Kart Rainbow Road edition of WebGL maps that uses these tiles: