tecznotes

Michal Migurski's notebook, listening post, and soapbox. Subscribe to this blog.

Check out the rest of my site as well.

Jan 28, 2014 4:41am

bike seven: building a cargo bike

This Nishiki Colorado is the seventh bicycle I’ve owned in my life (I’m not counting the blown-plastic bigwheel I rode in Ansonia, CT in 1982). In an effort to further stave off car ownership, I’ve outfitted it as a cargo bike using an Xtracycle FreeRadical X1:

I mostly use it for food shopping. With the cargo bags on either side, I can move a four-bag load of groceries the 2.3 mile distance between my house and the grocery store.

The conversion was relatively simple.

The Nishiki Colorado base came from Craigslist, where late 80s/early 90s mountain bikes are currently easy to find. This one was $90, in excellent condition. I had expected to get a beater bike and just use the frame, but the components on the bike were all in great shape. The wheels did require a bit of spoke adjustment, something that I chose to learn to do myself instead of asking a bike store to quickly do it. I bought the FreeRadical at Tip Top, a bike store near my house in North Oakland, for $500.

The FreeRadical extension attaches to the frame at three points: one bolt attaches near the kickstand mounting plate just behind the bottom bracket, and two additional bolts fit into the dropouts where the wheel normally connects. The connection is strong and stiff, and makes a single extra-long frame for the bike as a whole. The cargo extension has its own additional dropouts, and the rear wheel attaches to those about 18” back from where it would normally sit. Xtracycle includes detailed instructions and there are a few adjustments for different types of base bikes.

There were three challenging steps to completing the connection to the rear wheel: moving the rear brake, moving the rear derailleur, and lengthening the chain.

The FreeRadical requires a linear-pull brake, different from the center-pull cantilever brakes that might typically come with this kind of bike. The linear-pull brake has to be bought separately, and needs a slightly different amount of cable pull to engage. The bike store advised me to get a new brake lever to account for the difference, but I found that the “mushy” feel with the old lever was tolerable so I left it alone.

The rear derailleur is a fiddly part, and since it’s so much further to the back of this bike I also had to use an extra-long cable to attach it. The longer cables are usually marketed to tandem bike owners, and Tip Top had one included with the FreeRadical in the box. Adjusting a derailleur can be a pain, but fortunately the ones produced in the past ~25 years are relatively standard and easy to work with. Once I had it all connected and set the high and low limits, it took about fifteen minutes of riding up and down the street with a screwdriver in my hand, stopping every few feet to adjust the derailleur indexing until it all clicked into place.

Lengthening the chain requires a bike chain tool, and you have to buy two normal-length chains and connect them end-to-end.

Here’s what the bike looked like when I first brought it home:

I’m happy with the goofy blue & yellow color, and I’m glad that the new brake and derailleur placement still let me keep the color-matched blue cable housings up at the front. I’ve taken it on BART once; rather than attempt to move it up the stairs, I used the station elevators. I got a few weird looks and comments on the bike.

Jan 21, 2014 6:06am

blog all video timecodes: how buildings learn, part 3

Following a tip from Jason Kottke, we’ve been watching the six-part BBC series How Buildings Learn, narrated by Stewart Brand and based on his book. The third part, Built For Change, should be required viewing for any designer or developer. It’s rich with advice that is now quietly part of the software canon. The episode includes several cameos from architect Christopher Alexander, famous to the software world as the inspiration for the concept of design patterns. What’s most interesting here is the explicit relationship between longevity and lean, repair-like methods.

“All buildings are predictions; all predictions are wrong.”

On San Francisco’s numerous Victorians, including the famous painted ladies: “Right angles, flat walls, and simple pitched roofs make them easy to adapt and maintain.”

Montréal’s McGill University Next Home project is an example of homes designed for modification. They’re fairly standard-looking row houses, “easily modified, customized as finances allow … easily adaptable, flexible, and most of all affordable.” Each building is sold half-finished, with new owners expected to finish parts of the house beyond the core living area:

Once you get comfortable with the idea that a building is never really finished, then it comes naturally to build for flexibility. For instance, in a new building you can have some areas that are left half-completed, the way attics used to be.

Professor Avi Friedman, on revisiting the homes:

5,000 homes have been built in Montréal since the idea was first introduced. When we came back to the homes three years later, to our surprise and joy we found that 92% of all the buyers changed, modified, improved the homes.

The theme of architects returning to a completed project, to check on how it’s been used and learn from its post-construction history, is one that Brand returns to many times. Lots of congruence with standard software UX practice there.

On conservatism:

Most buildings that are designed by architects to look radical, wind up pretty conservative. A better approach is to start conservative and sensible, and then let the building become radical by being responsive.

The episode finishes up with architect John Abrams talking about his company’s fascinating practice of meticulously documenting every home they construct in photographs, prior to finishing. The photos show plumbing, framing, electrical, and other construction details prior to the addition of drywall:

We started this process years ago. What happens is, we take those photographs, put them in a book, and that book stays with the house. Presumably forever. It becomes very useful to the carpenters and tradespeople, but then it increases in value over time … there’s this document, it’s like x-ray vision into the walls.

“A building is not something you finish, a building is something you start.”

Jan 20, 2014 7:26am

talk notes, urban airship speaker series

Nolan Caudill extended an invitation to come speak at the Urban Airship Morning Liftoff Speaker Series last week, and I was happy to have the chance to put into words and pictures some of what I’ve been working on at Code for America. Our amazing class of 2014 fellows just started, and we’ve been rushing to fill their heads with stuff before sending them off to their city residencies for the month of February. I’m so excited about the current class; it’s the first time I’ve been here for the full year (I joined in May of last year).

The notes below are a partial summary of my talk from Friday morning. I adapted some elements from my November keynote at Citycamp Oakland, and some thoughts that I previously put into my keynote at TransportationCamp West.

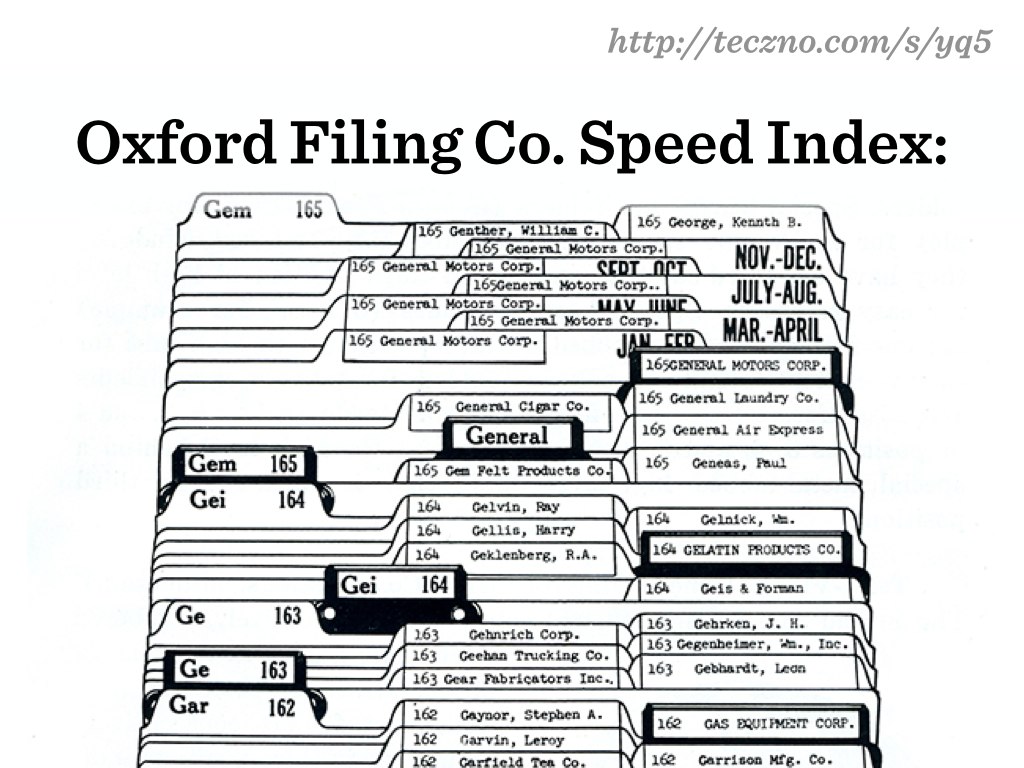

Coding with government is special. Government operates at longer time scales than startups, and remembers a time when information technology used to mean paper.

Paper is easy to mock. Jon Stewart has been taking the Veterans Administration to task for months now, about their massive backlog of outstanding medical claims. The floor under these boxes is supposedly having structural problems:

Paper is easy to mock, but it takes people with hearts and brains to move it around, and often the human processes around paper form communities of practice to get things done better and more effectively than simply computerizing everything.

A useful question, then, is how our current code and technology choices at CfA might better preserve what’s good about the old while still introducing the innovation of the new?

Three important technical goals can help: resiliency to environments and expectations, error-friendliness for developers and users, and sustainability for makers and caretakers.

I’m thinking of resiliency to two factors: environments, and expectations. I love this photo of Carlos Valenciano shutting down Kansas City’s IBM mainframe, the same one that he turned on almost two decades ago:

The technology environments used by local governments are changing, from heavy mainframes to smaller, more nimble systems, often open source like the work we do at CfA. This year’s project with Louisville, the Jail Population Management Dashboard, was developed with portability across environments in mind. Shaunak, Marcin, and Laura developed a supple and gorgeous visualization environment, and backed it with a conservative, cleanly-documented backend layer written in PHP and SQL Server. Those are two decidedly un-sexy technologies, but the bright seam between front-end and back-end allowed Denver to adapt the dashboard to their own purposes with their own compliant backend. Resiliency.
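To make that seam a little more concrete, here is a minimal sketch in Python. It is not the Louisville dashboard's actual code (the real backend was PHP and SQL Server); the names `CountsBackend`, `InMemoryBackend`, `CsvTextBackend`, and `peak_day` are all hypothetical, and the sample rows are invented for illustration. The idea is only that the front-end depends on a small agreed-upon shape of data, so a second city can bring its own backend as long as it answers in the same shape.

```python
from typing import List, Protocol, Tuple

# One row per day: (label, count). The shape is an assumption for this sketch.
Row = Tuple[str, int]


class CountsBackend(Protocol):
    """The seam: anything that can return daily counts in the agreed shape."""

    def daily_counts(self) -> List[Row]:
        ...


class InMemoryBackend:
    """Hypothetical backend for one city, holding rows directly."""

    def __init__(self, rows: List[Row]) -> None:
        self._rows = list(rows)

    def daily_counts(self) -> List[Row]:
        return list(self._rows)


class CsvTextBackend:
    """Hypothetical backend for another city, parsing 'label, count' lines."""

    def __init__(self, text: str) -> None:
        self._text = text

    def daily_counts(self) -> List[Row]:
        rows: List[Row] = []
        for line in self._text.strip().splitlines():
            label, count = line.split(",")
            rows.append((label.strip(), int(count)))
        return rows


def peak_day(backend: CountsBackend) -> Row:
    """Front-end logic that only knows about the seam, not the storage."""
    return max(backend.daily_counts(), key=lambda row: row[1])
```

Either backend can be handed to `peak_day` without the front-end changing, which is roughly the property that let a second city swap in its own compliant backend.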

Adapting to new expectations means riding the border between commerce and culture. I’m not yet comfortable with the right wording here, but CfA has benefited greatly from the positive perception of helpful techies planning civic hackathons. Can this perception last? Or isn’t it our mission to work on stuff that matters so we can push the work to the unsexy societal gaps where needs are unaddressed?

Error-friendliness is a term I’ve borrowed from Ezio Manzini’s paper on suboptimality: “low specialisation or ‘suboptimality’, regarding a certain purpose, is a strategic valuable property for biological organisms, summoned to confront themselves, in time, with potential environmental changes.” Developer error friendliness is permission to deliver work that’s suboptimal in strategic ways that allow it to be touched, tweaked, and modified piecemeal over time. The enemy here is premature efficiency: the Don’t Repeat Yourself or Convention Over Configuration principles, when taken to extremes, are two ways in which we rob ourselves of clues to intent in code.

David Edgerton’s Shock Of The Old offers additional hints:

As the British naval officer in charge of ship construction and maintenance in the 1920’s put it: “repair work has no connection with mass-production.”

City information technology is more like repair work than mass-production. Code that addresses user needs must be fit to those needs, and there’s rarely a mass-market product to be found that can do this without adjustment and tweaks. This similarity to repair is an advantage: the approach is more craft-like, more custom, and therefore carries with it more opportunities for learning in the agile/lean sense. For what it’s worth, this is also a summary of what I think went wrong with the 2013 relaunch of Healthcare.gov: a commodity-style purchasing mentality applied to a totally new service, with no opportunity for experimentation and learning hiding in that massive price tag.

User error-friendliness is a related concept. Try searching for a birth certificate in our Oakland public records application RecordTrac, and you’ll be immediately pointed toward the County of Alameda's Clerk-Recorder's Office (510-272-6362 or acgov.org/auditor/clerk) for this commonly-encountered mistake. Small-scale, repair-like moves continually nudging users toward the things they actually want.
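A nudge like that can be very small. The sketch below is not RecordTrac's actual implementation; `REFERRALS` and `referral_for` are hypothetical names, and only the birth certificate entry comes from the example above. It assumes a plain phrase lookup against the text of a request.

```python
from typing import Optional

# Hypothetical table of commonly misdirected requests. Each phrase maps to a
# short referral message; new entries get added as new mistakes turn up.
REFERRALS = {
    "birth certificate": (
        "Birth certificates are handled by the County of Alameda's "
        "Clerk-Recorder's Office (510-272-6362 or acgov.org/auditor/clerk)."
    ),
}


def referral_for(request_text: str) -> Optional[str]:
    """Return a referral message if the request mentions a known phrase."""
    # Lowercase and collapse whitespace so minor typing differences still match.
    normalized = " ".join(request_text.lower().split())
    for phrase, message in REFERRALS.items():
        if phrase in normalized:
            return message
    return None
```

A table like this is deliberately dumb: adding one more entry is the repair-like move, and nothing else needs to change.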

Sustainability is about who can modify a system, and who can verify that it’s working. Github and “learn to code” are necessary but not sufficient answers to this problem, and it’s not enough for us to simply throw code over the fence when we’re done. Care must be taken that README documents say the right things to the right audiences, that basic architectural principles of the web are honored, and that we’re not landing anyone in hot water by delivering indecipherable weird (a.k.a. “Node for America”).

We’ve built some minimalist instance monitoring services and adapted a repository testing app to help make this last part a reality, and we’ve got a full plate of work planned for this year to help our fellows do their best work.

Jan 10, 2014 6:12am

john mcphee on structure

John McPhee’s Structure made a big impression on me when I read it last year, so I’m happy to see it out from behind the New Yorker paywall. It’s a longform investigation of thought process and order and tools and approach to writing, and I’ve nicked some of the scissors & paper ideas for my own organization.

On crisis:

The picnic-table crisis came along toward the end of my second year as a New Yorker staff writer (a euphemistic term that means unsalaried freelance close to the magazine). In some twenty months, I had submitted half a dozen pieces, short and long, and the editor, William Shawn, had bought them all. You would think that by then I would have developed some confidence in writing a new story, but I hadn’t, and never would. To lack confidence at the outset seems rational to me. It doesn’t matter that something you’ve done before worked out well. Your last piece is never going to write your next one for you. Square 1 does not become Square 2, just Square 1 squared and cubed. At last it occurred to me that Fred Brown, a seventy-nine-year-old Pine Barrens native, who lived in a shanty in the heart of the forest, had had some connection or other to at least three-quarters of those Pine Barrens topics whose miscellaneity was giving me writer’s block. I could introduce him as I first encountered him when I crossed his floorless vestibule—“Come in. Come in. Come on the hell in”—and then describe our many wanderings around the woods together, each theme coming up as something touched upon it. After what turned out to be about thirty thousand words, the rest could take care of itself. Obvious as it had not seemed, this organizing principle gave me a sense of a nearly complete structure, and I got off the table.

On order:

When I was through studying, separating, defining, and coding the whole body of notes, I had thirty-six three-by-five cards, each with two or three code words representing a component of the story. All I had to do was put them in order. What order? An essential part of my office furniture in those years was a standard sheet of plywood—thirty-two square feet—on two sawhorses. I strewed the cards face up on the plywood. The anchored segments would be easy to arrange, but the free-floating ones would make the piece. I didn’t stare at those cards for two weeks, but I kept an eye on them all afternoon. Finally, I found myself looking back and forth between two cards. One said “Alpinist.” The other said “Upset Rapid.” “Alpinist” could go anywhere. “Upset Rapid” had to be where it belonged in the journey on the river. I put the two cards side by side, “Upset Rapid” to the left. Gradually, the thirty-four other cards assembled around them until what had been strewn all over the plywood was now in neat rows. Nothing in that arrangement changed across the many months of writing.

On attention:

So I always rolled the platen and left blank space after each item to accommodate the scissors that were fundamental to my advanced methodology. After reading and rereading the typed notes and then developing the structure and then coding the notes accordingly in the margins and then photocopying the whole of it, I would go at the copied set with the scissors, cutting each sheet into slivers of varying size. If the structure had, say, thirty parts, the slivers would end up in thirty piles that would be put into thirty manila folders. One after another, in the course of writing, I would spill out the sets of slivers, arrange them ladderlike on a card table, and refer to them as I manipulated the Underwood. If this sounds mechanical, its effect was absolutely the reverse. If the contents of the seventh folder were before me, the contents of twenty-nine other folders were out of sight. Every organizational aspect was behind me. The procedure eliminated nearly all distraction and concentrated only the material I had to deal with in a given day or week. It painted me into a corner, yes, but in doing so it freed me to write.

On software:

He listened to the whole process from pocket notebooks to coded slices of paper, then mentioned a text editor called Kedit, citing its exceptional capabilities in sorting. Kedit (pronounced “kay-edit”), a product of the Mansfield Software Group, is the only text editor I have ever used. I have never used a word processor. Kedit did not paginate, italicize, approve of spelling, or screw around with headers, wysiwygs, thesauruses, dictionaries, footnotes, or Sanskrit fonts. Instead, Howard wrote programs to run with Kedit in imitation of the way I had gone about things for two and a half decades. … Howard thought the computer should be adapted to the individual and not the other way around. One size fits one. The programs he wrote for me were molded like clay to my requirements—an appealing approach to anything called an editor.

Jan 5, 2014 8:02pm

blog all oft-played tracks V

The thing about not blogging for six months is that you lose your sense of what’s worth talking about. For the most part, I’ve been using Twitter, Pinboard, Tumblr, and Code for America’s own mailing lists to let out my daily allowance of words and pictures. However, I remain a believer in Jeremy Keith’s suggestion that in a universe of pre-baked publishing platforms, there’s no “more disruptive act than choosing to publish on your own website,” so here I am making an effort to write on this site again in 2014.

This music:

- made its way to iTunes in 2013,

- and got listened to a lot.

I’ve made these for the past four years, lately. Also: everything as an .m3u playlist.

1. Mark Reeve: Planet Green

One track from Cocoon’s excellent compilation series.

2. Trust: Candy Walls

Came to me by way of Sha’s mixtapes for friends.

3. Laika: If You Miss (Laika Virgin Mix)

Spookiest, most earworming thing on the 1995 Macro Dub Infection compilation.

4. Hot Chip: These Chains

5. Metallica: The Four Horsemen

From when Metallica was still playing screechy speed metal in the East Bay, 1983.

6. Burial: Truant

Loner might be a better track, but this one made the 2013 number-of-listens cut.

7. Fuck Buttons: The Lisbon Maru

Thanks Nelson for the recommendation. Surf Solar is also excellent, like a bad dream.

8. Grimes: Oblivion

9. John Tejada: Stabilizer (Original Mix)

Perfectly reminiscent of Orbital.

10. T-Power: Elemental

1994-ish proto-jungle.