tecznotes

Michal Migurski's notebook, listening post, and soapbox. Subscribe to this blog.

Check out the rest of my site as well.


Mar 28, 2011 8:12am

walking papers cheaply

Where was I.

Since launching Walking Papers almost two years ago, we’ve seen a volume of activity and attention for the site that we never quite anticipated, not to mention the thing where it’s currently living at the Art Institute Of Chicago.

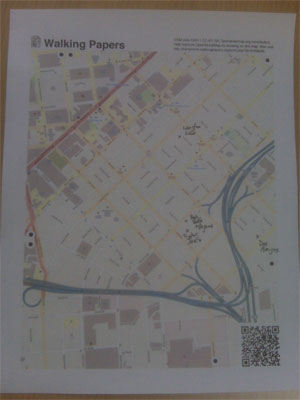

(photo by Nate Kelso)

A thing that’s always bothered me is that for all the whizz-bangery of the SIFT technique for parsing images, we’ve had about a 50/50 success ratio on the scanning process. A lot of this is attributable to low-quality scans and blurry QR codes, but that just serves to underscore the issue that Walking Papers should be substantially more resilient in the face of unpredictable input. More to the point, it should work with the kinds of equipment that our surprise crisis response user base has available: digital cameras and phonecams. They fit in your pocket and everyone’s got one, unlike a scanner. Patrick Meier from Ushahidi and Todd Huffman have been particularly badgering me on this point.

(tl;dr: help test a new version of Walking Papers)

Fortunately, around the same time I was looking for answers I encountered a set of Sam Roweis’s presentation slides from the Astrometry team: Making The Sky Searchable (Fast Geometric Hashing For Automated Astronomy). Bingo!

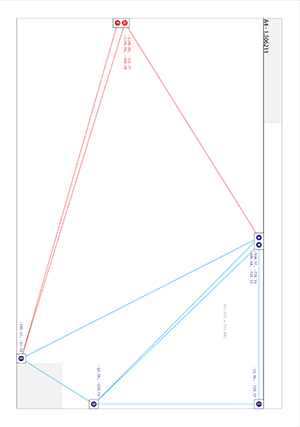

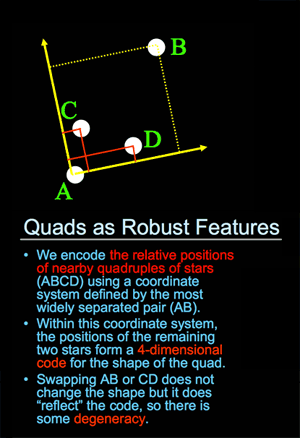

Dots are the answer. Dots, and triangles. The Astrometry project (which by the way is on Flickr) uses sets of four points to make a “feature”, while I went for three with the addition of a set of inter-triangle relationships that have to be satisfied:

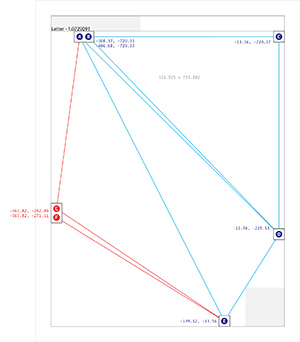

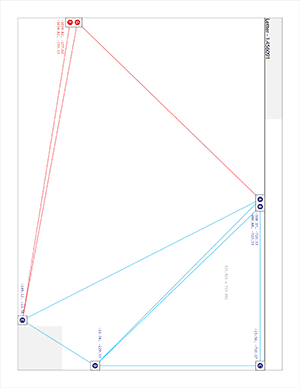

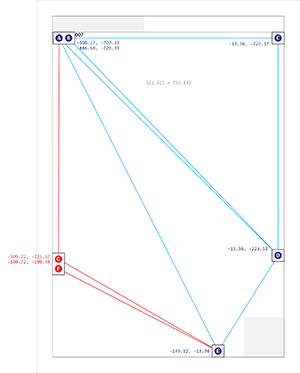

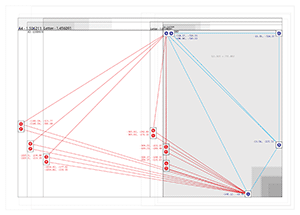

That’s an image of all six size/orientation combinations supported by Walking Papers, and the placement of dots on each. The five blue dots to the top and right maintain their relative positions regardless of print style and act as a kind of signature that this is in fact a print from Walking Papers. The remaining two red dots differ substantially based on the size of the paper and the print orientation, making it possible to guess page size more reliably than with the current SIFT-based aspect ratio method. The entire arrangement is only “ratified” by a successful reading of the QR code in the lower right corner.
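To make the signature idea concrete: a triangle's shape can be summarized by the ratios of its side lengths, which stay the same when the page is shifted, rotated, or photographed from nearer or farther away. What follows is a minimal Python sketch of that idea, not the Walking Papers code. The function names, the 0.05 tolerance, and the coordinates are all invented for illustration.

```python
import math


def triangle_signature(a, b, c):
    """Return (shortest / longest, middle / longest) side ratios.

    The pair is unchanged by translation, rotation and uniform scaling,
    so similar triangles share one signature. Sorting the sides means a
    mirror image gets the same signature too.
    """
    sides = sorted([math.dist(a, b), math.dist(b, c), math.dist(c, a)])
    longest = sides[2]
    return (sides[0] / longest, sides[1] / longest)


def similar(sig1, sig2, tolerance=0.05):
    """True when two signatures agree within a small tolerance (assumed value)."""
    return all(abs(p - q) <= tolerance for p, q in zip(sig1, sig2))


# Hypothetical dot positions: one triangle on the print layout, and the
# same triangle as it might land in a photo (rotated, doubled, shifted).
on_paper = triangle_signature((0, 0), (3, 0), (0, 4))
in_photo = triangle_signature((10, 10), (10, 16), (2, 10))
print(similar(on_paper, in_photo))
```

The tolerance leaves some room for photos that are not taken perfectly square to the page.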

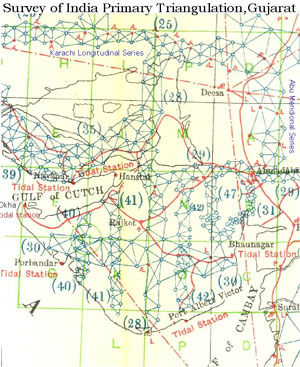

This triangle traversal put me in mind of the Great Trigonometrical Survey of India, an early 19th-century mapping effort by the British led by George Everest:

> Finally, in 1830 Everest returned to India. He started by triangulating from Dehra Dun to Sironj, a distance of 400 miles across the plains of northern India. The land was so flat that Everest had to design and construct 50 foot high masonry towers on which to mount his theodolites. Sometimes the air was too hazy to make measurements during the day so Everest had the idea of using powerful lanterns, which were visible from 30 miles away, for surveying by night. … The survey’s line of triangles up the spine of India covered an area of 56,997 square miles, from Cape Comorin in the south to the Himalayas in the north.

The process of finding these dots starts with an image like this:

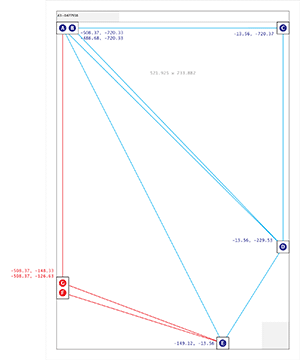

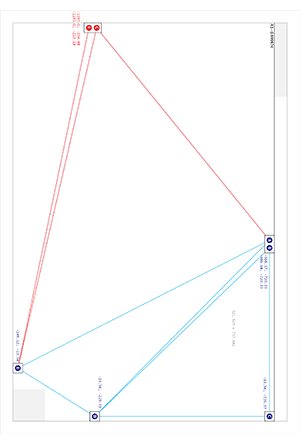

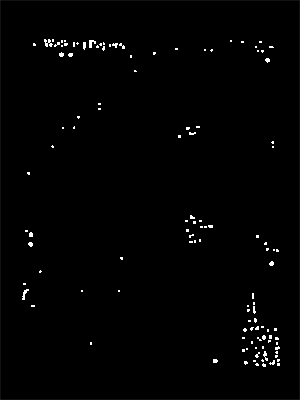

…and moves on to an intermediate step that looks for all localized dark blobs using a pair of spatial filters:
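As a rough illustration of what a pair of spatial filters can do here, the sketch below compares a small local average against a larger one and keeps pixels that are much darker than their wider surroundings. The choice of two box (mean) filters, the window radii, and the threshold are assumptions made for this example. The post does not specify which filters the real code uses.

```python
def box_mean(image, radius):
    """Mean brightness in a (2 * radius + 1) square window, clipped at edges."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            rows = range(max(0, y - radius), min(height, y + radius + 1))
            cols = range(max(0, x - radius), min(width, x + radius + 1))
            total = sum(image[j][i] for j in rows for i in cols)
            out[y][x] = total / (len(rows) * len(cols))
    return out


def dark_blobs(image, small=1, large=4, threshold=70):
    """Return (x, y) centroids of regions darker than their surroundings.

    image is a list of rows of grayscale values, 0 (black) to 255 (white).
    The radii and threshold are illustrative defaults, not tuned values.
    """
    near, far = box_mean(image, small), box_mean(image, large)
    height, width = len(image), len(image[0])
    mask = [[far[y][x] - near[y][x] > threshold for x in range(width)]
            for y in range(height)]

    blobs, seen = [], set()
    for y in range(height):
        for x in range(width):
            if not mask[y][x] or (x, y) in seen:
                continue
            # Flood-fill one 4-connected region of marked pixels.
            stack, pixels = [(x, y)], []
            seen.add((x, y))
            while stack:
                px, py = stack.pop()
                pixels.append((px, py))
                for nx, ny in ((px + 1, py), (px - 1, py),
                               (px, py + 1), (px, py - 1)):
                    if (0 <= nx < width and 0 <= ny < height
                            and mask[ny][nx] and (nx, ny) not in seen):
                        seen.add((nx, ny))
                        stack.append((nx, ny))
            cx = sum(p[0] for p in pixels) / len(pixels)
            cy = sum(p[1] for p in pixels) / len(pixels)
            blobs.append((cx, cy))
    return blobs
```

Large dark areas such as text or map features score low because the wide window is dark as well, which is the point of using two window sizes instead of a plain brightness threshold.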

Once we have a collection of candidate dots, the problem looks quite similar to that faced by Astrometry: looking for likely features based on similar triangles, starting with the three blue dots in the upper right, and walking across the page to find the rest.
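The search itself can be sketched roughly as follows: try triples of candidate dots until one has the same shape as a known triangle from the print layout, then use that match to predict where the next dot should be and look for a candidate near the prediction. This is a minimal sketch under stated assumptions, not the actual implementation. Every name, tolerance, and coordinate is hypothetical, and it ignores mirror images and perspective distortion.

```python
import itertools
import math


def side_ratios(a, b, c):
    """Ordered ratios |ab| / |ca| and |bc| / |ca|, keeping vertex order."""
    ca = math.dist(c, a)
    if ca == 0:
        return None
    return (math.dist(a, b) / ca, math.dist(b, c) / ca)


def find_triangle(template, candidates, tolerance=0.05):
    """Return the first ordered triple of candidates shaped like template."""
    want = side_ratios(*template)
    for triple in itertools.permutations(candidates, 3):
        got = side_ratios(*triple)
        if got and all(abs(w - g) <= tolerance for w, g in zip(want, got)):
            return triple
    return None


def predict(template_pair, found_pair, template_point):
    """Map a layout point into the photo using two matched points.

    Complex numbers make the scale-and-rotate step a single multiplication.
    """
    p0, p1 = (complex(*p) for p in template_pair)
    q0, q1 = (complex(*q) for q in found_pair)
    scale_rotate = (q1 - q0) / (p1 - p0)
    z = q0 + scale_rotate * (complex(*template_point) - p0)
    return (z.real, z.imag)


def nearest(point, candidates, max_distance):
    """Closest candidate to point, or None if it is too far away."""
    best = min(candidates, key=lambda c: math.dist(point, c))
    return best if math.dist(point, best) <= max_distance else None


# Hypothetical layout: three known dots plus a fourth to walk to.
layout = [(0, 0), (4, 0), (0, 3)]
fourth = (8, 1)
# Hypothetical blob centroids from a photo, including two stray blobs.
dots = [(300, 300), (70, 50), (100, 90), (20, 200), (100, 50), (90, 130)]

match = find_triangle(layout, dots)
guess = predict(layout[:2], match[:2], fourth)
print(match, nearest(guess, dots, max_distance=5))
```

Checking every permutation is fine for a few dozen candidates. With many more blobs, some kind of index over triangle shapes would be needed to keep the search fast.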

If you’re inclined to help test this new thing, I’ve installed a version of the cameraphone code branch at camphone.walkingpapers.org. When I’m satisfied that it works as well as the current Walking Papers, I’ll replace the current print composition and scan decoding components with the new version, and hopefully we’ll start to see even more scans and photos submitted.

Thank you!

Comments (4)

Glad we could be of help! Keep us in the loop as things proceed. Geometric hashing is a pretty valuable tool.

Posted by David W. Hogg on Tuesday, March 29 2011 11:19am UTC

This is a really slick way to do it. Have you tested how the geometric hashing handles keystoning if the photographs aren't orthogonal? I'm guessing that photographs of stars are geometrically consistent.

Posted by Marc Pfister on Tuesday, March 29 2011 2:29pm UTC

Thanks Marc, it's definitely been something of an improvement over the old regime. One of the reasons I was looking for test input from a lot of different people was to determine how much variation we might see when people liberally interpreted the call for photographs. We deal with keystoning by implementing a small amount of tolerance for error in the triangle shapes, so that even if a photo is taken off-angle the output will be discernible. Right now the main problem I'm seeing with current tests is that some of the dark parts of the "Walking Papers" text in the upper left are being confused with the nearby dot pair on portrait views. The output is skewed enough to be weird, but not so skewed that the QR code can't be read. I may change the appearance of the WP logo to account for this.

Posted by Michal Migurski on Tuesday, March 29 2011 5:29pm UTC

David, your project is an inspiration!

Posted by Michal Migurski on Tuesday, March 29 2011 5:29pm UTC
